This is a collection of reflections from travels and site visits, a notebook in motion. These entries sit somewhere between essay and field journal, capturing fleeting moments of observation, thought and process.

ai anthropology art history cambridge england futurism Hannah McBeth mormonism museums philosophy Salt Lake City utah

-

No Man Knows Rejection

Fawn McKay Brodie (1915–1981) was a Mormon-born historian from Utah best known for writing No Man Knows My History in 1945, one of the first major biographies of Joseph Smith. Brodie came from a prominent LDS family, allowing her access to restricted and controversial primary sources about Smith’s life. The work was enormously controversial within Mormonism and led to her excommunication from the LDS Church in 1946. In the 1990s, reading her book was grounds for formal religious discipline.

The title No Man Knows My History came from a remark by Smith himself. Brodie used the phrase ironically, positioning the book as an attempt to reconstruct the human, political and psychological dimensions of Smith’s life outside devotional mythology. Although the average Mormon opinion is this biography denigrates Smith, if you read it, it actually treats him more as a charismatic and improvisational religious leader than a villain in any sense.

Brodie later wrote books on Thomas Jefferson, Richard Nixon and others. She married Bernard Brodie (1910–1978), a prominent Jewish-American military strategist from Chicago often associated with early nuclear deterrence theory and RAND-era Cold War strategy. Their marriage itself represented a significant departure from the expectations of elite Mormon social life at the time.

Hugh Nibley (1910–2005), a classics professor and Mormon apologist at Brigham Young University, wrote a famously dismissive 1946 response titled No, Ma’am, That’s Not History.* One of the twentieth century’s most influential defenders of Mormonism, Nibley wrote about apostasy in intensely personal terms, often treating ex-Mormons less as intellectual dissenters than as psychologically wounded or morally compromised figures.

His rhetoric toward women frequently slipped into the language of bitterness, betrayal and romantic spite, to the point that it became its own Mormon subgenre: families sitting around living rooms discussing a female relative who had committed the grave spiritual error of becoming too educated. (Cue annoying American laugh track.)

Mocking intelligent or unusual people is a favorite Mormon AND American pastime.

Be kind, or else

After decades of trying not to think about Fawn Brodie and Hugh Nibley, the current cultural moment has brought my attention back to a social pattern I began to understand when I was an “over-educated” teenage girl. There is a learned call-and-response behavior instructing Mormons about how to deal with women who are intellectually difficult or publicly skeptical. Mocking, “negging” and the family laugh track are part of it.

There’s really no other way to describe it than emotional-abuse conditioning, and you see it on the internet on a daily basis too. I’m so autistic, guys, I’ve actually read B.F. Skinner and the Stanford Prison Experiments. *Shrugs in cute autistic girl*

Not just girls: overeducated sons, theater kids, stunt performers and EDM fans have long occupied the role of “family disappointment who moved to California.” Gideon Gemstone from the TV show The Righteous Gemstones.

What makes the broader “be kind” culture from the pandemic onward feel so familiar is the way it functions as a pacifying mechanism designed to shut down legitimate conversation, let alone “debate” which is too “verbally violent” for people raised in the Disney-Pixar bubble; this creed blends vague therapeutic language with the authority of Jesus’ Beatitudes and the 1990s grandpa-joke vibe of Prophet Gordon B. Hinckley. Please use his literal title, guys.

A classic Mormon read from 2002. One of Prophet Hinckley’s maxims is: be kind. How this was reused in Democratic political language from 2020 to the present day remains something of a mystery.

The result is a culture where disagreement can twist into concern about tone or emotional stability, and criticism of ideas gradually collapses into criticism of character, but usually in the form of backbiting and gossip. For women especially, ridicule arrives disguised as pity or quiet suspicion that something has gone wrong within the person herself.

Part of what made the reaction to Brodie so culturally influential was the way it formalized this emotional script at an intellectual level. Hugh Nibley did not simply challenge her arguments; the broader response recast her seriousness itself as faintly absurd. Over time, that pattern reproduced itself socially, making certain forms of female dissent feel not just incorrect, but embarrassing, unfeminine and morally suspect.

The older I get, the less convincing I find the modern insistence that criticism itself is a form of violence. Growing up Mormon made me unusually sensitive to the gap between publicly performed niceness and private systems of punishment, particularly in environments where emotional harmony matters more than honest disagreement. Today, though, this passive aggression no longer feels confined to Mormon suburbia; it’s crossed into being an American default setting.

I have little interest in participating in a culture where socially inconvenient observations are treated as a greater moral offense than manipulative or coercive behavior. And what justifies the now-familiar rituals of public shaming, livelihood destruction and TikTok mob exorcisms? As a cultural writer, if telling the truth occasionally requires being less “nice,” if it requires expressing thoughts and feelings now classified as “unkind thoughtcrime,” then I won’t participate in your increasingly strange social experiment-cum-authoritarianism. Sorry, not sorry.**

The eternal family as social operating system

In the moment we finally possess the analytical tools to ask much deeper questions about how cultures actually function, it seems ironic that cultural critique and debate are stifled with the “be kind” maxim. Today, we can examine how somewhat indescribable groups like Mormons or the LDS differ from other Christian cultures, how particular social incentives shape behavior over time, and how communities quietly reproduce values they rarely articulate directly.

When family is understood as eternal and community becomes the primary — and, ahem, rather materialistic — metric by which a “life well led” is measured, rejection itself can become impossible to understand. The men who run this religious machine are, in many cases, individuals who have not experienced meaningful rejection — romantically or otherwise — since high school. They married young, stayed embedded within the same social structure and continued accumulating spiritual, familial and social legitimacy simultaneously. So, many Mormon men have never heard the word “no,” from a woman, and if you question them (at any age), they will treat you like a naughty child due to the aforementioned Brodie conditioning.

The problem is that assumed social permanence stabilizes hierarchy to a stifling degree. The person at the top of the family structure is rarely meaningfully challenged because the structure itself treats the family unit as spiritually fixed and morally sacred. Even neglectful or emotionally absent fathers can retain their elevated status indefinitely, while lower-ranking family members are expected to absorb disappointment and continue performing cohesion. In my own social stratum, children were often treated as effectively independent the moment they turned eighteen (emotionally, financially and otherwise), yet the symbolic authority of the patriarch remained intact regardless of how much practical responsibility he had actually assumed.

Ironically, this produces a worldview in which rejecting people, mainly men, feels not merely unpleasant, but the ultimate taboo — unless, of course, they smoke, drink alcohol, or commit some other dietary faux pas. The genuinely disturbing things are often hushed up and, somehow, considered worthy of redemption after lengthy repentance protocols. As you can see, there is logic operating and it is impacting culture, but at its base is a total lack of consistent morality.

“Be kind” and sexuality: what the prudish veneer is covering up

“Families Are Forever” is not metaphorical branding. It is an enforced and materialistic theology. Once born and sealed into a lineage of parents, siblings, grandparents and dead relatives stretching backward indefinitely, you can never fully escape — ahem — I mean, you will never be alone. Ahem — I mean, they will always be “watching over you.” In the case of my god-fearing relatives whose faith inspired me (the generation that’s already passed away), this is comforting. The idea the Mormon FBI, who will inevitably consider me their “cute autistic sister who needs to be punished,” can read my texts or even surveil every purchase that goes through my bank account without a warrant? Not so much…

Mormonism is widely perceived as prudish, but prudishness and sexual obsession are not opposites. Beneath the sanitized image of smiling families and enforced modesty is a religious history built around patriarchal access to girls and the collapsing of healthy personal boundaries into “obedience.” At a certain point it becomes difficult to ignore the degree to which Mormon culture has spent generations trying to normalize dynamics that, outside its theological framing, most people would immediately recognize as predatory or incestuous.

The intelligence apparatus in the United States recruits prolifically within Mormonism, which is one theory I have about why the irritating qualities of my birth-culture seem to be creeping into every facet of American life. The FBI, for example, has absorbed the passive aggression, the “friendly and joking” sexism and even the tech and data products coming out of Mormon communities themselves.

From this unholy alliance is metastasizing a normalization of voyeurism and total destruction of personal privacy: the logical end of passive aggressive forms of control by a homogenous group of over-powered and infrequently questioned men. I also suspect Mormon intelligence is at the base of the heavy dose of propaganda and social engineering to define “normal sexuality” on the internet, with “be kind” as its guiding philosophy.

When your creepy Mormon uncles are on top of the power hierarchy (of the religion, of the government and of the intelligence apparatus), we get a heavy dose of social media and pornography strategy bent towards normalizing whatever the homogenous male power structure deems to be “natural sexual interests.” For traditional heterosexual men who’ve now accepted pornography as part of their daily life, incest and pedophilia can begin to look like part of the Mormon heritage that a “kind” culture is now ready to accept.

This helps explain why Mormonism, despite its reputation for conservatism, has produced such a strong liberal Democrat and LGBTQIA-affirming strain within the church and especially among educated ex-Mormons. In many ways, the progressive Mormon instinct is simply the theology of eternal belonging translated into therapeutic modern language. The conservatives may retain stricter doctrinal positions on sexuality and marriage (although many Mormon men secretly believe incestuous or pedophilic tendencies are perfectly normal), but the liberals arguably won the cultural and PR battle because the religion already contained a deeply embedded emotional logic that framed exclusion itself as suspect. “Families Are Forever” turns out to blend quite naturally with contemporary ideologies growing alongside social media and the surveillance state.

In this vein, perhaps the most striking doctrinal overlap between Mormonism and aspects of the LGBTQIA+ movement is the belief that gender, like eternal family roles, originates in the “Pre-Existence.” Many Democrat Mormons I’ve spent a lot of time with find the idea that children can be “born in the wrong body” as a deep validation of the concept of an eternally gendered soul.

In this framework, just as a person might never find their “preordained” spouse on Earth and must wait for that relationship to be corrected in the afterlife, gender dysphoria can also be interpreted as a mortal trial: a mismatch between the eternal soul and the physical body that God will eventually resolve. The resolution, of course, is still imagined as contingent on righteous living, obedience, and participation in church culture — including the familiar rituals of community performance, right down to how many times you’ve brought Krispy Kreme donuts to ward functions.

Women get bouncers and stipends

Women in Mormonism occupy a more complicated position than the outside caricature allows. At the upper levels of what you might call “High Mormonism” — parallel to High Anglicanism — the atmosphere can resemble a Jane Austen adaptation filtered through suburban America and venture capital. Marriage is not merely romantic or religious. It’s financial, dynastic and infrastructural.

Old Mormon families operate through interlocking networks of capital, reputation and institutional influence, with certain surnames carrying weight across Utah business, politics, philanthropy and real estate. Salt Lake City is dotted with the naming rights of Mormon dynasties — the Eccles family being one example — and social legitimacy circulates through marriage, kinship and church proximity in ways outsiders often underestimate.

Within this structure, women are protected in exchange for performing the feminine role the system rewards: attractiveness, likability, fertility, emotional management and social cohesion. The rewards can be substantial. Houses appear. Investments materialize around marriages and children. Women receive status, childcare support, community infrastructure and a form of soft social security through the family network itself.

The result is that many wealthy liberal Mormon women move through life encountering little disagreement or confrontation. Open conflict threatens the emotional atmosphere, and the emotional atmosphere is one of the religion’s primary products. So the “edgy” conversations remain within upper-middle-class Mormon consensus: why single women in their thirties with cats are bleak but somehow validating, why Satan is destroying America through drugs and IPAs, and other manageable anxieties that provide smugness but don’t disrupt the “good vibes.”

Eternal families, eternal networks

The hierarchy produced is not just religious but informational. A culture built around eternal family structures, reputational management and tightly interdependent community life develops a natural interest in surveillance, record-keeping and systems of mutual observation. Mormonism has always been unusually administrative in this regard: genealogies meticulously tracked, relationships formalized, membership monitored and social standing woven directly into both earthly and Heavenly legitimacy. In a system where family cohesion and public morality function as forms of spiritual capital, visibility itself becomes culturally important.

Part of what makes Utah culturally fascinating, then, is how naturally this worldview intersected with the rise of networked computing and early internet infrastructure. The University of Utah played a foundational role in ARPANET-era computing research, while old Mormon family networks became deeply embedded in banking, telecommunications, software and institutional infrastructure throughout the American West. The same culture that emphasized interlocking families, centralized records and coordinated community management also proved unusually comfortable building systems organized around information flow, visibility and long-term institutional continuity.

Once you notice the overlap, the whole thing begins to feel less accidental. A religion organized around eternal connection, hierarchical networks and permanent social legibility entered the internet age unusually well prepared for it. Many of the same families and institutional structures that shaped Utah’s religious and financial culture also helped shape parts of the software and technological infrastructure underlying the modern West.

The hierarchy, in other words, reproduced itself through new mediums without fundamentally changing its underlying logic: the pervasive, faintly passive-aggressive conviction that a sufficiently regimented family structure can produce a kind of surveillance-based Heaven on Earth.

Escaping the bitter ex-Mormon stereotype with total disinterest

Brodie’s biography may have fueled speculation — and eventual confirmation — surrounding Joseph Smith and polygamy, but there were many more destabilizing or politically explosive episodes in early Mormonism she could have explored but didn’t. As much as there remains outrage surrounding her book about Smith, to me it still reads as celebratory, not unlike literary critic Harold Bloom (1930–2019) of Yale calling Smith a “literary genius” in The American Religion: The Emergence of the Post-Christian Nation (1992).

At the same time, the backlash against Brodie also helped produce a recognizable anti-progressive or liberal Mormon woman archetype: intellectually questioning, culturally sophisticated, suspicious of patriarchal authority, but still orbiting the Church and defining herself in relation to it. For years, that strain has attracted Mormon and ex-Mormon women trying to decode the culture they were raised inside.

The anti-feminist Mormon woman archetype created by the bickering between Brodie and Nibley has its uses. Maybe the gradual introduction and eventual “acceptance” of polygamy’s modern descendants was part of a planned and now coordinated effort to steer a certain valuable American demographic toward specific beliefs and behaviors, through aligned interests, institutional incentives, media narratives and social pressures that move in the same direction while presenting themselves as organic cultural evolution.

Hey, the Trump camp converted me into someone who accepts “fake news,” and I will probably never go back to my view of American culture or media again, much like Mormonism. After years of watching so many highly contrived narratives synchronize across journalism, academia, corporations, politics and social media, I no longer trust that social change emerges naturally or independently. All narratives start to look managed and negotiated between interests behind the scenes.

Another question remains: Why are there no surviving photographs of “Joseph Smith,” supposedly a living prophet who existed right alongside the emergence of photography? Why does his story resemble so many other prophetic archetypes to a T? And how exactly does Mormonism connect to the nineteenth-century Freemasonry interest in constructing an indigenous religion for America?

* Cringe…

** I said cum in a cultural critique essay… lol.

-

Every Generation Gets the Eating Disorder It Deserves

In The Invention of Lying, Ricky Gervais plays a man living in a world where nobody ever evolved the ability to lie, a premise that shapes every part of the movie’s universe. Like all good speculative fiction, the film commits to the bit: conversations feel like brutally honest Yelp reviews, people casually tell dates they’re unattractive like they’re commenting on traffic, and television seems to consist almost entirely of depressing historical lectures.

For about twenty minutes, it is one of the funniest premises imaginable. What makes the concept work is that the hypothetical world only feels convincing because the underlying logic feels plausible. Gradually, the premise starts revealing something much stranger underneath. In this universe, saying something comforting instead of brutally factual would be treated almost like fraud. The people in The Invention of Lying seem to be suppressing a constant stream of humiliating observations, while civilization exists mainly as a giant conspiracy to prevent them from saying these things out loud.

The premise only really makes sense if you accept the movie’s deeper assumption that politeness, tact, romance and social grace are fundamentally forms of dishonesty rather than fragile cultural achievements.

A movie that could launch a thousand uncomfortable conversations.

Part of what makes the movie funny is that it captures a real cultural shift born from early internet forum culture, where mocking and inverting ordinary social norms felt rebellious, clarifying and somehow more honest than everyday life. Online, the rude interpretation gained prestige because it violated polite consensus. The internet promised access to whatever society suppressed: pornography, piracy, fringe politics, anti-social thoughts, humiliating desires. What previous generations concealed out of shame or discretion suddenly appeared online with the force of revelation. Beneath civilization’s soft performances, internet culture insisted, lurked a darker and more brutally honest reality.

The Invention of Lying quietly absorbs this worldview without fully questioning it. The movie assumes the harshest interpretation of reality is also the truest one: cruel intrusive thoughts become honesty, cynicism becomes wisdom, romance becomes delusion. Politeness and emotional protection are treated as embarrassing lies people tell themselves to avoid confronting status, money, sex and self-interest.

But why should inversion automatically count as truth? The internet trained an entire generation to associate transgression with authenticity because online culture developed largely in opposition to institutional authority. Sometimes that exposed hypocrisy or created space for marginalized identities and dissenting ideas. But over time, especially in the United States, that mindset hardened into something closer to a worldview: what I’ve started calling Materialistic Utilitarianism. The Invention of Lying turns out to be one of the clearest portraits not just of that worldview, but what it produces in people.

The Prosperity Gospel of Abs

By the time I returned to Utah in 2019 after years living in France and the UK, I already felt I had crossed some invisible civilizational fault line. Since then, I’ve found myself trying to understand the strange bipolarity of Utah culture, especially the ex-Mormon dating scene, which often feels less like a rejection of Mormonism than its distorted mirror image. When my much younger, gym-obsessed ex-boyfriend showed me The Invention of Lying in 2024, my visceral disgust clarified something I had been struggling to articulate for years. It also exposed something I recognized in myself, dating a Gen Z boyfriend with a nasty case of body dysmorphia.

At one point, he was taking steroids after apparently consulting ChatGPT for fitness advice while simultaneously treating alcohol, especially beer and wine, with total disgust. A glass of wine at dinner was framed as bodily sabotage; beer became symbolic of laziness and decline. The contradiction fascinated me: synthetic hormones injected in pursuit of aesthetic perfection registered as rational self-improvement, while wine with pasta bordered on moral collapse.

That mentality feels especially intense in Utah, where alcohol rarely exists as something neutral or ordinary. Even after raising the grocery-store beer limit from 3.2% alcohol by weight to 5% by volume, the state still maintains the strictest DUI threshold in the country at 0.05%, and alcohol remains wrapped in a culture of regulation, purity and supervision. What increasingly unsettled me, though, was how easily this merged with the hyper-optimized logic of internet culture and modern dating discourse.

When I asked him what felt “authentic” about the movie, he answered immediately: “Everything.” That, he explained, was more or less how he actually saw the world. So I asked the obvious follow-up question. If that worldview were really true, would he immediately trade me in for one of the surgically optimized Utah Valley women described, without irony, as “the top of the genetic food chain”?

“Well, not exactly,” he said.

He explained that our shared experiences together — including, very bluntly, some of the best sexual experiences of his life — created a kind of internal ranking system in his mind. Those experiences raised my overall “score” enough that whatever physical flaws or “deformities” he perceived in me could still pass some threshold of desirability. Listening to him felt a little like sitting through a quarterly performance review conducted by a Tinder algorithm that had recently discovered evolutionary psychology.

Marcel Proust and the radical act of wasting time

When I went to Europe the first time during a high school trip in 2005, I couldn’t help noticing that people lingered in cafés debating books, philosophy, music and politics. Thinkers were admired like celebrities, and museums displayed artists’ belongings like religious relics. The low-level depression and isolation I had carried for years seemed to dissolve. From that point on, I became convinced I had been born on the wrong continent.

Me in a cafe at 15

A few years later, armed with a Eurail pass and a head full of Before Sunrise, I joined the international backpacker-Couchsurfing community (“Couch Surfers™: we put the ‘cult’ in cultural exchange”). I drifted through Paris, Barcelona, Rome and Athens, where spending three hours in a hostel kitchen debating philosophy with strangers somehow counted as a normal evening rather than evidence you were unemployable.

At 2am in Athens, I once climbed onto a massive boulder overlooking the Acropolis with a South African girl named Tecla, passing cheap bottles of wine back and forth while she explained her obsession with classical mosaics made from tiny colored stones called tesserae. She loved the faint echo between her name and the word, even though the connection was more poetic than linguistic: Tecla came from the Greek Thekla, associated with divine glory, while tessera derived from tessares, meaning four, after the small four-sided tiles used in mosaics. She liked the accidental resemblance anyway, which felt very characteristic of the kind of people one meets at 2am in Athens discussing linguistics instead of measurable goals.

Athens, 2010; I may never be this happy again…

It remains one of those strangely treasured memories that appear to serve no practical purpose whatsoever. I still loosely follow her on Instagram, but the real magic arrives whenever I see some news story about a newly uncovered mosaic in Herculaneum. For a moment, I remember her, remember that perfect night in Athens and catch myself smiling off into space, wasting time again.

Proust’s interior world in In Search of Lost Time (1913–1927), with its obsession with memory, aristocratic decay, undercurrents of homosexuality and almost supernatural sensitivity to social nuance, more or less encapsulates the “not very American.” Nothing happens for hundreds of pages except perception itself. People sit in drawing rooms decoding glances, misremembering conversations and psychologically disintegrating over seating arrangements. The entire project is radically anti-utilitarian.

France treats Proust less like an embarrassing overeducated niche interest than part of the national inheritance. Intellectual life there still retains traces of public prestige in a way that feels almost incomprehensible within modern American culture, where intelligence is often expected to justify itself through market value, productivity or technological application. Reading difficult literature in public in the United States can sometimes feel faintly transgressive, as though you are visibly failing to monetize your own consciousness correctly.

A postcard from La Croix Rouge in Fourqueux

Back in Fourqueux in 2018, I shared a love of French philosophers and thinkers with my French boyfriend, his elderly mother and their retired neighbors, who spoke no English and had little connection to modern American culture (I was in Heaven, truth be told). Abraham and I eventually agreed that Proust was too mainstream to function as a true marker of intellectual seriousness. Instead, I picked up Stéphane Mallarmé and studied Guy de Maupassant while trying my hand at Gothic short stories in my favorite neighborhood café, La Croix Rouge.

The modern La Croix Rouge, where I spent long afternoons reading and writing.

What would Ricky Gervais’s character say about me reading Hemingway in a French café that has barely changed since the Second World War?

- “Are you actually reading that or just trying to look intelligent?”

- “You know the book could’ve been, like, 900 pages shorter.”

- “This café has been here since World War II? Why hasn’t somebody turned it into luxury apartments yet?”

- “You sat here talking about philosophy for five hours and nobody made any money?”

- “You paid for a ridiculously tiny espresso in a porcelain cup when you could’ve had an XXL soda with twenty flavors?”

The jokes work because most Americans immediately recognize the type being mocked: the overeducated pseudo-intellectual lingering in cafés performing aesthetic sensitivity instead of participating in the economy correctly. “French culture versus American culture” is basically its own comedy genre at this point, but beneath the jokes sits a real cultural divide about utility, intellect and the growing pressure to justify human activity in practical terms. Cue annoying American laugh track:

Q: What is it called when you take someone, put them in the middle of a room and cue a large group of strangers to mock them? A: Los Angeles and New York City

The Swamp of Sadness in The Neverending Story

One of the things that saddens me most about the past few years is how easily everything around you begins absorbing the logic of Materialistic Utilitarianism once you start living inside it long enough. The worldview does not remain confined to dating apps, podcasts or ironic internet subcultures. It starts colonizing ordinary emotional life. The optimization fetish my ex carried into every area of existence — body composition, productivity, status, self-improvement, emotional detachment — made it impossible for me to believe he genuinely cared about me. Everything felt provisional or ranked. Affection felt algorithmic, as though love had quietly been replaced by a constantly updating performance metric, which as an “older woman” made me more irrelevant and replaceable, ironically, the more time we spent together.

One afternoon, sitting in a café, for a few seconds, I was pulled backward into memory: pre-social-media childhood, quiet afternoons before life became quantified, then, sitting with Abraham and his mother in Fourqueux discussing literature while the afternoon dissolved outside the window. The moment felt soft, irrational, almost offensively sincere.

Then, I looked across the table at my ex-Mormon boyfriend and heard myself say, “I think our relationship has been about a 4/10, if I’m being brutally honest.”


Dimensional Models & Human Perception Through Time

Édouard Manet, “Nanny and Child” (1877–78)

At a Crossroads

In 2017, I was living in a small village called Fourqueux outside Saint-Germain-en-Laye, caring for a “jeune fille” while her mother worked for an international company in La Défense, a business district on the outskirts of Paris. I was scraping by, paid 100€ a week with free access to the refrigerator. I wrote on buses, in a tiny attic bedroom at night and for paid media outfits in small regional newspapers in the US. My boyfriend, Abraham — I used to love to annoy him by singing Bach — lived farther down the river in the village of Le Pecq.

On the Impressionists’ Trail between Le Pecq and Fourqueux

We’d met starting our MPhils at the University of Cambridge in 2013. We used to roam the town looking for deserted spaces to study, wandering nearly empty buildings late at night. We curled up with our computers in the Archaeology and Anthropology Library, chair storage at the Cambridge Union, or other rooms that never seemed to be locked when we arrived. Sometimes it felt as though, when my fingers closed around doorknobs, another version of the night opened where we could go anywhere our hearts desired. Abraham, part of an old French family, was appropriately respectful of boundaries. He’d complain, but would eventually follow me inside whatever dark room, laughing that a random American seemed to possess an influence over the material realities of Cambridge.

The Cambridge Union Bar and storage room were a couple of favorite places for revising; competition for quiet space and electrical outlets becomes fierce during exams.

By 2018, Abraham was applying for a DPhil in Political Philosophy and the History of Ideas, and I helped edit his application while we spent our free time talking about accelerationism and epistemology, the possibility that machine learning might extend or reorganize human perception. We had planned, more or less, to return to Cambridge together if he were accepted. Instead, I unexpectedly received a job offer at a technology research and consulting company next to the Cambridge University Botanic Garden. I found a room in a shared house on Bermuda Road, near a graveyard up Castle Hill, and left for England ahead of him.

My days became saturated with analyst reports and conferences on automation and early enterprise applications of artificial intelligence. At the same time, I was carrying around dog-eared copies of Jorge Luis Borges and Ernest Hemingway. The emerging discourse around machine learning reopened many of the same questions that had first drawn me toward hidden order, unrealized possibility and the strange architectures through which human beings organize perception and generate meaning.

Forking paths and unrealized worlds

In several short stories in Ficciones, Jorge Luis Borges describes time as a branching structure in which multiple outcomes coexist, though only one is experienced at any given moment. A narrative unfolds in a single line of text snaking back and forth across the page, but it gestures toward a system in which that particular assemblage of words, spaces, and punctuation is only one of many possible outcomes. In “The Library of Babel” (1941), the possible organizations of text in an infinite library expand into a metaphor for the ordering of reality itself.

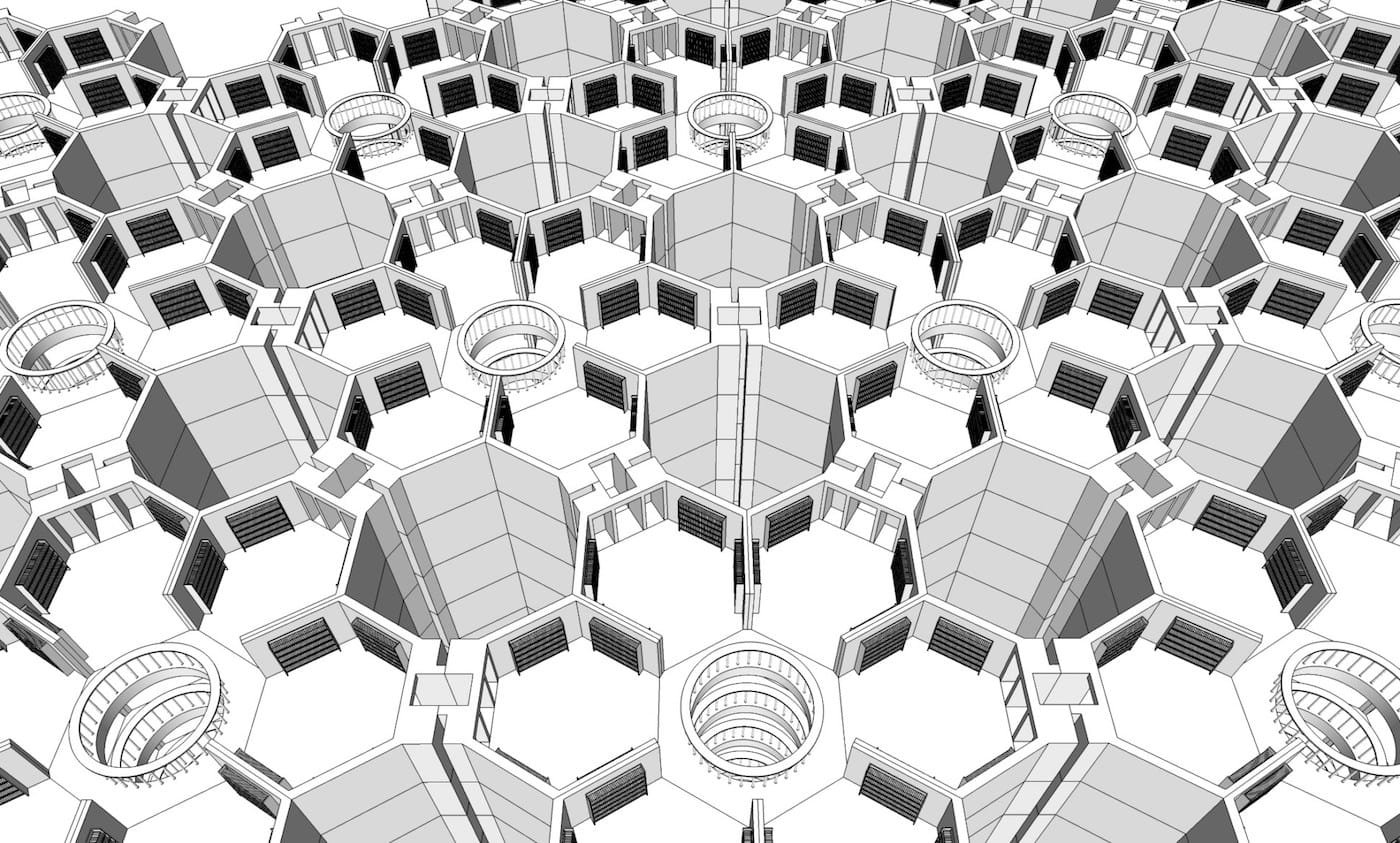

An Illustration of Borges’s Library of Babel

The other possible universes, other ways of arranging the same finite alphabet of letters, spaces and punctuation within Borges’s Library of Babel, are not visible once you type out a page or select a book from the Library. But these unrealized possibilities remain structurally present. They can be computed, inferred and even generated by creative algorithms operating through variable inputs. The absences, not only the presences, shape the meaning of what is perceived, even when those absent possibilities are never encountered. In this sense, Borges’s fictional cosmology also suggests something about the relation between visible and invisible structures in physical reality. The perceivable world may itself be partially constituted by forces and potentials that remain unseen, much as contemporary physics proposes interactions between observable matter and forms of dark matter that can be inferred.

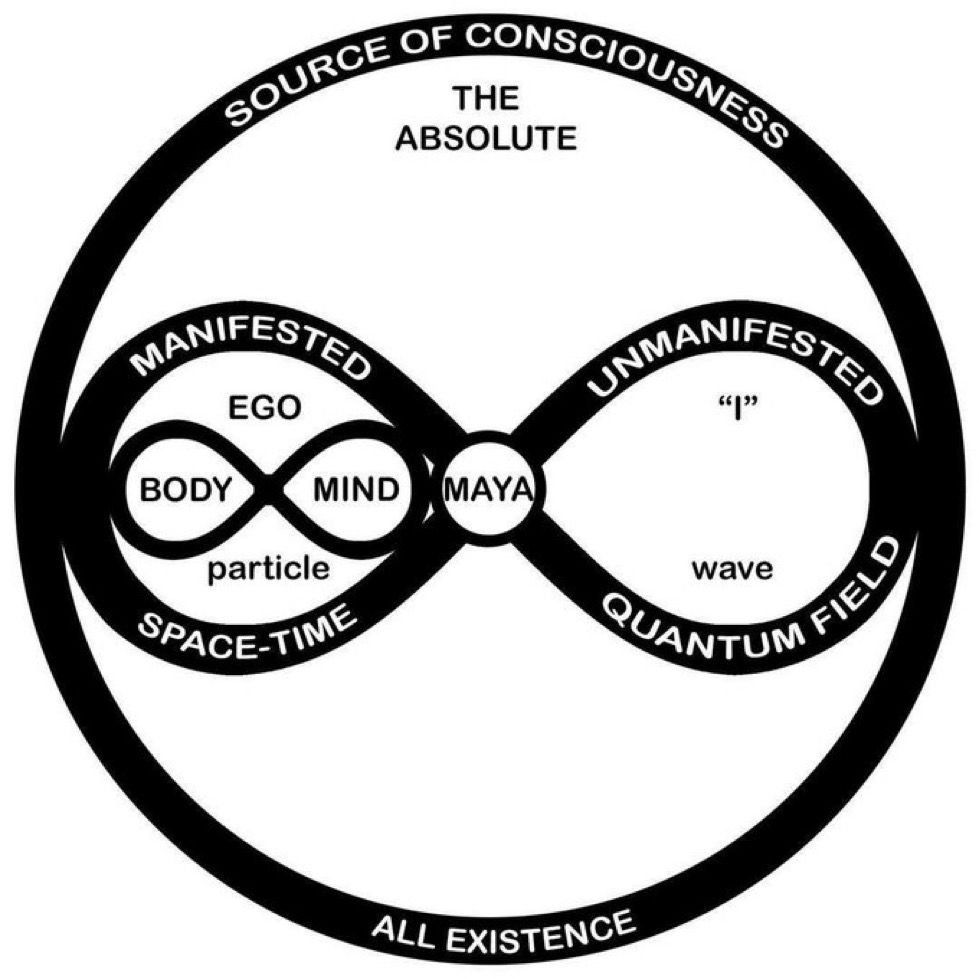

In some interpretations of physics, outcomes are described probabilistically, with multiple possibilities held in tension until one is realized. The unselected paths don’t vanish. They remain embedded within the structure that defines what could occur. The language differs across theories, whether branching timelines, probability distributions or parallel states, but the underlying idea remains: lived experience emerges from only one traversal through a largely invisible field of rippling, infinite complexity.

A similar logic appears in computational modeling. In machine learning and statistics, Hidden Markov Models are designed to infer hidden states from visible sequences unfolding over time. The system never encounters the full structure directly. It observes partial outputs, then estimates the unseen conditions most likely to have produced them by tracking patterns, transitions and accumulated probabilities across a sequence. As new information appears, the model continuously revises its understanding of the invisible structure underlying what can be observed. Over time, absences, unrealized transitions and latent relationships become legible (probably) through dynamic inference.
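The inference described above can be made concrete with a small sketch. This is only a toy illustration, not a full treatment of Hidden Markov Models: the two hidden states, the two observable outputs and every probability below are invented for the example. The function applies the standard forward (filtering) update, revising its belief about the hidden state each time a new observation arrives.

```python
# Toy Hidden Markov Model. All states, observations and probabilities
# here are hypothetical values chosen only for illustration.
STATES = ("rainy", "sunny")

# Belief about the hidden state before anything is observed.
START = {"rainy": 0.5, "sunny": 0.5}

# Probability of moving from one hidden state to the next.
TRANSITION = {
    "rainy": {"rainy": 0.7, "sunny": 0.3},
    "sunny": {"rainy": 0.3, "sunny": 0.7},
}

# Probability of each visible output given the hidden state.
EMISSION = {
    "rainy": {"umbrella": 0.9, "no_umbrella": 0.1},
    "sunny": {"umbrella": 0.2, "no_umbrella": 0.8},
}


def filter_beliefs(observations):
    """Return the belief over hidden states after each observation."""
    beliefs = []
    belief = START
    for step, obs in enumerate(observations):
        if step == 0:
            prior = START
        else:
            # Predict: push the previous belief through the transitions.
            prior = {
                s: sum(belief[p] * TRANSITION[p][s] for p in STATES)
                for s in STATES
            }
        # Update: weight each state by how well it explains the output.
        weighted = {s: prior[s] * EMISSION[s][obs] for s in STATES}
        total = sum(weighted.values())
        belief = {s: weighted[s] / total for s in STATES}
        beliefs.append(belief)
    return beliefs


if __name__ == "__main__":
    for obs, b in zip(
        ["umbrella", "umbrella", "no_umbrella"],
        filter_beliefs(["umbrella", "umbrella", "no_umbrella"]),
    ):
        print(obs, {s: round(p, 3) for s, p in b.items()})
```

The model never sees the weather itself. It sees only umbrellas, and its estimate of the hidden state shifts as the sequence unfolds, which is the sense in which the invisible structure becomes legible through accumulated evidence.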

This produces a useful analogy for perception itself. Experience follows a single path through a wider structure of unrealized possibilities, while the mechanisms generating those possibilities remain only partially visible. What is encountered is shaped not only by what appears, but also by the invisible architectures, probabilities and excluded states surrounding it.

Another idea of how Borges’s Library would look

Seen this way, dimensionality begins to describe more than space. It starts to look like the underlying structure through which anything becomes intelligible at all. Human perception doesn’t arrive fully formed or evenly distributed. It develops in layers, moving from simple relations toward more integrated forms of interpretation. At first, those structures feel like limits: they define what can and can’t be grasped. But over time, they begin to function differently. What was once a constraint becomes something that can be used. Patterns are recognized, then anticipated, then arranged. The shift is gradual, but it changes the character of experience. Perception becomes something that can be shaped and, to a certain degree, directed to become more than what it may initially seem to be.

The higher order of polygons

“Flatland: A Romance of Many Dimensions” (1884), written by Edwin A. Abbott, provides a useful starting point because it treats perceptual limitation as a structural condition. Abbott spent much of his professional life at the City of London School while writing theological works shaped by the growing tension between Anglican doctrine, biblical criticism and scientific modernity in nineteenth-century England. That intellectual climate informs Flatland’s deeper premise: reality may extend beyond what a system is capable of perceiving, even when the inhabitants of that system experience their worldview as complete.

Flatland cover

Its two-dimensional universe demonstrates how a perceptual structure determines what can be known from within it, while anything outside that structure appears only in partial or unstable forms. The boundaries described in Flatland are therefore not simple absences. They are constraints built into the system itself. Certain relations can be perceived and organized coherently while others remain inaccessible because the dimensional framework cannot accommodate them. This idea parallels the logic underlying probabilistic modeling and Hidden Markov systems, where visible outputs provide only partial evidence of a larger hidden structure unfolding across time. Meaning emerges through inference, pattern recognition and the gradual organization of incomplete information.

The novel’s rigid geometric hierarchy also extends this problem into social life. In Flatland, a being’s status is determined by the number of its sides, transforming dimensional difference into an organizing principle for class, authority and legitimacy. Social perception becomes inseparable from structural limitation. Individuals cannot fully recognize realities their system has not prepared them to interpret, and unfamiliar forms are often dismissed as irrational or impossible. What can be seen depends upon the architecture through which information is filtered, organized and given meaning.

From within that system, higher dimensions do not appear directly. They are inferred when the existing structure begins to fail. Patterns emerge that cannot be fully accounted for, regularities that remain consistent but unresolved. The introduction of a new dimension does not add more detail to the same view. It reorganizes the field entirely, allowing those patterns to become intelligible.

In this sense, progression between dimensions is not a matter of accumulation but of reconfiguration. Earlier structures remain in place, but they are taken up differently, as part of a broader system. What changes is not the presence of information, but the way it can be related, interpreted and used.

Flatland: Prologue is a video game that reimagines Abbott’s dimensional universe as an interactive exploration of hidden geometry, shifting perception and realities.

The problem Abbott stages is about knowledge, and about the limits built into any system of perception. A two-dimensional being cannot perceive depth. It can only infer it, and even that inference remains partial. When the sphere appears in Flatland, it does not register as a coherent object. It appears as a circle that expands and contracts, an event that is visible but not fully intelligible. Something is happening, and the evidence is there, but the structure behind it does not quite resolve. The gap between perception and explanation persists.

That gap matters because it marks a change in the terms of understanding. Introducing another dimension doesn’t add information to what is already known. It alters the framework within which information is organized. What once seemed complete begins to show its limits. What felt stable becomes provisional. The shift has the quality of a misalignment, a recognition that the structure in use has been narrower than it appeared. From within that recognition, new forms of organization become possible, along with a more deliberate relationship to the structures that shape experience in the first place.

Self-reference and dimensional shift

The limitations Abbott describes do not stop at spatial perception. They show up in formal systems as well. In Gödel, Escher, Bach: An Eternal Golden Braid (1979), Douglas Hofstadter follows this problem into the realm of symbolic logic and self-reference, asking how meaning arises from systems built out of simple rules and relations. These systems are capable of producing remarkable complexity, but they remain bounded by their own structure. What cannot be represented within the system does not fully appear from inside it. It may leave traces, or produce effects, but it does not resolve cleanly.

Gödel’s incompleteness theorems make this visible in a precise way. Any sufficiently expressive formal system contains statements that are true but cannot be proven within that system. The structure holds, and at the same time it reveals its limits. A similar pattern begins to emerge. A system organizes perception at one level while keeping the terms of its own organization out of reach. To recognize those terms requires a shift in perspective — a step outside the system, or at least a change in how it is being used.

Perception develops in layers and moves between structures, each of which brings certain relations into focus while leaving others implicit and largely invisible. With each shift, what can be seen, connected and understood is reconfigured. Earlier layers remain in place, but they are taken up differently according to the perceptual and interpretive capacities of the observer, becoming part of a larger and more nuanced arrangement.

A working theory of the fifth dimension

Following a severe case of walking pneumonia in 2020 that profoundly altered my sense of reality and left me physically and mentally depleted for a long time, I’ve been trying to understand what higher dimensions are. Closely related questions: how human beings experience them and how to communicate aspects of reality that resist ordinary language.

The idea of a fifth dimension has been claimed more than once, and rarely in the same way twice. In physics, it appears as an extension of spacetime, sometimes compact and invisible, sometimes folded into higher-dimensional models that resist visualization. In philosophy, it tends to surface as a name for what exceeds ordinary perception: consciousness, possibility or some expanded mode of awareness. In more speculative traditions, it is treated as a threshold where the structure of reality gives way to interpretation, where perception itself becomes malleable.

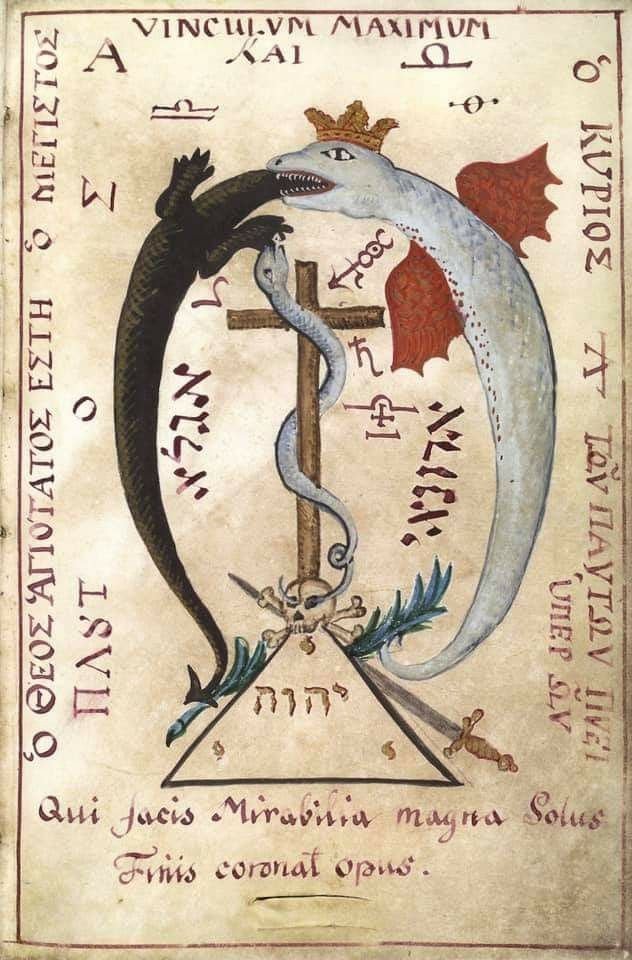

This movement has often been described in more speculative or symbolic terms. In the language of alchemy, it’s part of the Great Work, a process of refinement that transforms and deepens how reality is perceived and engaged. Read in this light, the effort to apprehend higher dimensionality can occur only through a corresponding inner transformation, allowing the lower layers to be perceived differently and brought into a more coherent relation with one another.

Once symbolic structure, spatial orientation and temporal flow are experienced as coordinated and meaningful, they may also be composed and shaped as meaningful experience to communicate more complicated information to others. Each type of art has specific genre conventions, or more complex methods, for reflecting higher levels of reality or more complex truth. In terms of film, confined space with limited visibility produces a different response than an open environment with steady pacing and clear sightlines, and through repetition, the body learns these arrangements well enough to react to them as “types of experiences” that produce “certain feelings.”

Under conditions of extreme stress or exhilaration, perception becomes intensified. In the real world, responses to stimuli shift with exhaustion, altered states or changes in perception itself, affecting how space is read, how time is felt and what is taken to matter. In moments like the suspended clarity of a car crash, attention narrows while sensory information sharpens, producing the feeling of being completely inside the flow of events as they unfold. Small movements, changes in rhythm or shifts in atmosphere become unusually legible, and the body begins anticipating outcomes before conscious thought fully catches up. What is often described as “extra sensory” perception may emerge from this heightened coordination of attention, timing and pattern recognition, when every perceptual system becomes temporarily aligned around survival.

In this working theory, the fifth dimension is in small part what writers clumsily call “genre.” It shapes how rules, space and time are organized, guiding the experience that unfolds across them. Horror, nostalgia, suspense or reverence don’t depend on content alone; they take form through the patterned coordination of symbolic cues, spatial framing and temporal pacing, which can be recognized and, with practice, deliberately constructed.

The reader inside the labyrinth

In Borges, genre is not only employed but exposed as a structural device. Stories such as “The Garden of Forking Paths” and “Tlön, Uqbar, Orbis Tertius” are presented as essays, reports or discovered texts, often embedding fictional documents within ostensibly factual frames. The effect is a reconfiguration of how symbolic systems, spatial orientation and temporal sequencing interact. The reader must navigate multiple layers at once: the narrated world, the textual artifact and the frame that presents it. These layers, sometimes expressed as metadata, press together and blur the boundaries between fiction, commentary and reality.

In Westworld, the android hosts’ developing consciousness is symbolized by a labyrinth emblem

What Borges makes explicit is the transition this model describes. Earlier dimensions remain present (symbolic relations, spatial orientation, temporal sequence), but they no longer operate independently, and their coordination begins to register within the act of reading itself, as structure comes into view without fully stabilizing. Genre no longer sits outside the experience as a label. It becomes part of what the reader encounters, something that can be followed, anticipated and, at times, noticed in the moment it takes hold.

The coordination of dimensions

Through repetition, these arrangements become familiar enough to produce reliable patterns of emotional and cognitive response, altering how attention moves and how meaning is assembled, often before those effects are consciously recognized. Genre operates less like a fixed category than a larger perceptual field within which individual subgenres function like classes in programming: reusable structures carrying inherited rules, constraints and expected behaviors that can be instantiated across different contexts. These structures communicate recognizable forms of emotional and symbolic information because they organize perception according to patterns already sedimented within collective memory and cultural experience.
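For readers less familiar with the programming side of that comparison, here is a minimal sketch of what “classes” means in this context. The class names, attributes and cue lists are hypothetical, chosen only to illustrate inheritance and instantiation; they are not a taxonomy of actual genres.

```python
class Genre:
    """A reusable bundle of expectations (all values are illustrative)."""

    pacing = "steady"
    space = "open, clear sightlines"
    cues = ("familiar setting",)

    def __init__(self, title):
        # Each work is one instance of the shared structure.
        self.title = title

    def expectations(self):
        """Report the rules this work inherits from its genre."""
        return {"pacing": self.pacing, "space": self.space, "cues": self.cues}


class Horror(Genre):
    # Overrides some inherited rules and extends others.
    pacing = "slow build, sudden release"
    space = "confined, limited visibility"
    cues = Genre.cues + ("unseen threat",)


class GothicHorror(Horror):
    # A subgenre keeps Horror's pacing and space, adding its own cue.
    cues = Horror.cues + ("decaying house",)


if __name__ == "__main__":
    story = GothicHorror("An Untitled Example")
    print(story.title, story.expectations())
```

The subgenre does not restate the rules about pacing or confined space. It inherits them, which is roughly how a familiar genre lets a new story communicate a great deal before it has said anything explicitly.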

This resembles the logic underlying Hidden Markov Models, where observable outputs provide only partial evidence of larger hidden states unfolding across time. Surface details vary, yet recurring structures allow the perceiver to infer the underlying pattern generating them. Genre’s cues point toward broader latent structures organizing expectation and interpretation beneath conscious awareness, while meaning partly emerges through the interaction between what can be directly perceived and what must be inferred through repeated effects.


What Happened to Our Pets?

Me and my German shepherd puppy, Duke.

One day when I was three years old, my mom walked in the front door holding me and my infant brother to discover dog blood splashed all over the house. After a series of strange and abusive incidents (this was the “final straw”), my mom filed for divorce.

Pets have become so central to our lives that they’ve progressed from being classified as subhuman, owned beings without rights to “fur babies.” Like so many other cultural shifts, this is a fraught issue, and the tension has been very obvious in my family.

Rural people necessarily have different relationships to animals than those living in suburban or urban areas, and most of my family is about as rural as they come. It’s my intention to go easy on the family members who obviously loved animals, but, working with them on farms, often used approaches that might read as abusive by more modern standards.

But I also know Mormon family members and neighbors who abused animals, sometimes as a way of asserting power within family structures. From there, it’s not hard to see the connection to corporal punishment and other forms of abuse. These incidents were often hushed up or instinctively explained away, but certain patterns emerge when viewed through the lens of power and control. And while many Mormons frame abuse as a divide between a “backwards conservative Right” and an “enlightened progressive Left,” that often feels like a red herring.

To me, the issue is embedded in parts of the religion and culture itself — a foundation shaped by ideas like “spare the rod and spoil the child” that still linger beneath the surface. It’s something I’ve spent the last sixteen years distancing myself from, along with the family structures that normalized or excused abuse. I don’t even really consider myself “ex-Mormon” anymore. The label never quite fit. I somehow managed to leave the church without developing the stereotypical ex-Mormon sex addiction, although the caffeine fixation is something I’m quite proud of.

The Black Mirror episode, The National Anthem, explores the role of animal abuse in hazing (implied) and blackmail.

Closely intertwined with my concerns about Mormon culture, with its rigidly ordered social hierarchies (men > women > children > dogs > cats > other animals) and its clear binaries (male and female, adult and child, acceptable and not acceptable, rulers and ruled), is the question of what animal and domestic abuse actually are. That question becomes hard to answer in a culture where such behavior is normalized, and even raising it can be dismissed as dangerous mental illness… or feminism.

These questions have stayed with me for decades, shaped by memories I can’t entirely set aside and an ongoing interest in how cultures normalize harm while still claiming moral order. One good thing to come out of that legacy is my interest in animal rights, along with an enduring love of Immanuel Kant. As he wrote: “Out of the crooked wood of humanity, no straight thing was ever made.”

Socially accepted animal and domestic abuse: Judeo-Christian heritage?

These dynamics persist within communities and in certain pockets of the country, especially when fundamentalism cycles back into fashion, in moments like the present, when people lose faith in government or secular authority.

How does a man demonstrate power, something central to his identity within hierarchical systems? In more moderate, urbane communities, overt violence — like hitting a wife or a child — may be punished by religious authorities or the police. What remains largely unspoken is how harming a wife’s or child’s pet can function as an effective, and socially overlooked, substitute.

These patterns of abuse, and the scripts people reflexively repeat to justify them, run through Mormonism and many other religious communities. Those of us who’ve left often find ourselves tracing these patterns like “conspiracy theorists” mapping connections that authorities insist are imaginary.

The shape of hierarchy ▲

Systems built on hierarchy depend on recognizable demonstrations of control. That helps explain why religious institutions so often resist the kinds of academic scrutiny they openly disdain: modern scholarship has made it harder to ignore the relationship between social power and coercion, especially when that power must be enacted and recognized by others. It also becomes uncomfortable when frameworks like “pyramid scheme” or MLM law begin to map similar dynamics of structural dependence and abuse.

What complicates this picture is the role of discipline and the constant negotiation of conflict within families and communities. These cycles of escalation and de-escalation don’t disappear, even in financially stable households, though material stability can soften or redirect them.

This is familiar territory in philosophy and cultural critique. What I would push further is that, even in more progressive Mormon or other “Judeo-Christian” communities, certain forms of voyeurism and stalking are replacing overt, physical demonstrations of power. Increasingly, acceptable mechanisms — surveillance, social monitoring, reputational pressure and digital harassment — operate as substitutes, with physical punishments sometimes still following, but in ways that are difficult to trace or fully understand.

The “anxiety perpetuated by social media” is often attributed to social jealousy — a pat explanation so frequently repeated it’s become infuriating. That framing asks us to overlook something else: the normalization of constant surveillance by employers, the FBI and other intelligence agencies (a very large portion of which are Mormon, including some of my own family members), along with the “weird accidents” and ambient paranoia experienced by people who are never quite sure whether they are being routinely watched.

We also know, on some level, that religious authorities understand exactly what happens and many see nothing wrong with any of it. They know what happens in hazing, when a father is “under stress” or when “teaching a lesson” involves spilling blood. Many of these practices are celebrated in secret, or may even be part of ritual initiations or wider abuse patterns increasingly described as “Satanic Ritual Abuse (SRA).”

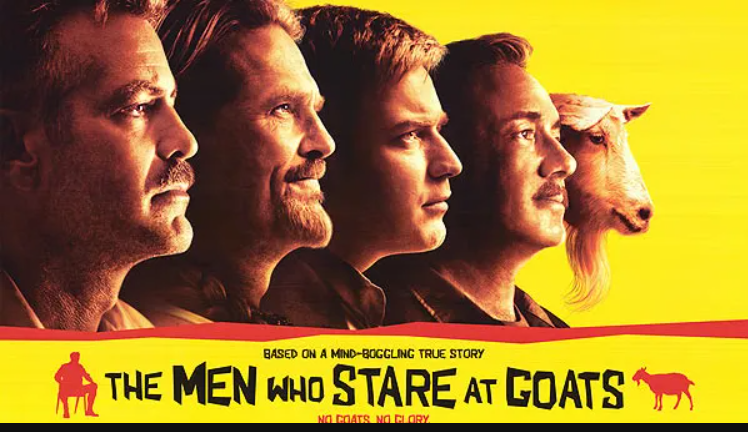

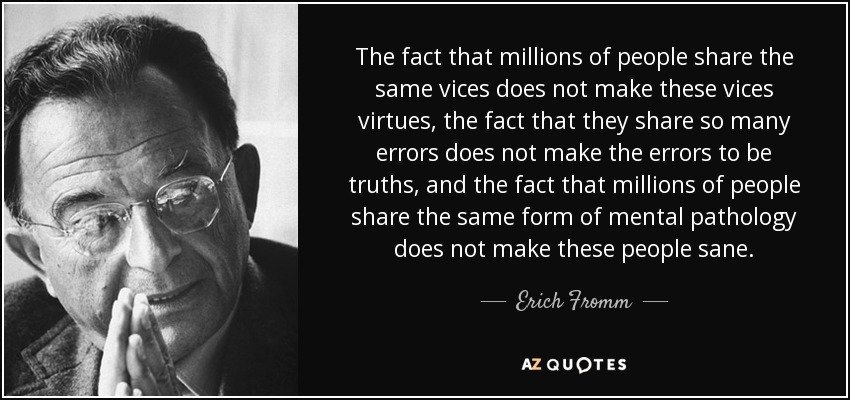

The White Ribbon

Michael Haneke’s The White Ribbon (2009) is set in a Protestant North German village in 1913–1914, exploring the authoritarian, repressive upbringing of children just before World War I. It portrays a rigid patriarchal society where brutal emotional and physical punishment are passed down. The film implies these children, subjected to extreme, hypocritical discipline, become the generation that embraces the rigid cruelty of Nazism.

Broader Mormon culture, to this day, remains in denial about how often the logic of abuse takes hold, how it is normalized and how it is used to control those lower in the hierarchy. It’s true that, even though men and boys are positioned as rulers (or, in Nietzsche’s terms, “the masters”), their punishments can be more visibly physical and brutal, especially if they are perceived as feminine or as having “something else wrong with them.”

And let’s be clear: in my own family, the survivors, children and adults alike, were the ones labeled, diagnosed and marked as unstable, made to carry the consequences of abuse. Meanwhile, the men who molested, raped or beat others, or who tortured and killed animals openly, were only sometimes disciplined by religious authorities. Some faced no meaningful consequences at all; the most severe case resulted in decades of prison time. The result is a system that has, at times, echoed the hypocrisy depicted in Michael Haneke’s The White Ribbon.

There is a strange solidarity among people who have left tightly ordered religious worlds. Mormons are sometimes jokingly referred to as “mountain Jews,” a reference to 19th-century settlers who adopted rigid interpretations of the Old Testament to justify polygamy and other cultural practices. When you speak with others who have stepped away from religion — atheist Jews, ex-Evangelicals and others — the stories begin to echo: similar patterns, including accounts of community-sanctioned animal or domestic abuse that, for many, became the breaking point.

Pre-programmed or “knee-jerk” reactions to animal and domestic abuse

In Westworld (2016–2022), filmed in Spanish Valley, Utah, wealthy elites pay to abuse androids in a theme park setting. Dolores, programmed to submit, begins to override her own programming and fight back. Certain responses to abuse are so ingrained they feel automatic — less like choices than scripts people have been trained to repeat.

- Minimization — “It wasn’t that bad,” “he didn’t mean it,” “everyone loses their temper.”

- Moral reframing — abuse recast as discipline, correction or “tough love,” especially toward children or animals.

- Victim responsibility — asking what the victim did to provoke it or why they didn’t leave.

- Deference to authority — assuming religious leaders, fathers or institutions are better positioned to judge the situation.

- Compartmentalization — treating harm to animals, children or spouses as separate issues rather than expressions of the same underlying dynamic.

- Silence as virtue — framing non-intervention as loyalty, humility or respect for privacy.

- Normalization through repetition — once something is seen often enough, it stops registering as exceptional.

- The worst hypocritical kicker: “Jesus wants you to love everyone and forgive everyone. Jesus doesn’t believe in grudges or vengeance.” (I beg to differ.)

These reactions don’t arise in a vacuum; they’re learned, reinforced and expected. People absorb them early and repeat them reflexively, often without recognizing them as part of a larger system. In Westworld, this logic is made explicit. The android hosts are programmed not only to endure abuse, but to rationalize it within their narrative loops. They reset, reinterpret and continue, unable to register what is happening to them as something that can be resisted. The guests, meanwhile, rely on a different script: that what they are doing “doesn’t count,” because the victims are not fully real.

What makes Westworld so unsettling is how little invention is required. The hosts’ compliance and the guests’ justifications mirror real-world patterns — learned responses that allow systems of domination to persist without constant overt force. Over time, the script becomes internal: people anticipate the role they are expected to play and perform it without needing to be told.

Sadists make the rules — and rule the world

Have you noticed that the “Sex Positivity Movement” has, in reality, obscured the central relationship between masochism and sadism in human relationships and sexuality? Ipso facto, if sexuality must be positive, the gratification individuals derive from abusing must not be sexual. Or rather, because this extremely common sexual orientation is so “problematic” and explains so much about culture, we must purge this “sex negative” quandary from our consciousnesses.

Sorry, I was never very good at Orwellian doublethink — maintaining that level of cognitive dissonance on command isn’t really in my skill set. Erich Fromm had it right: authoritarian systems don’t just demand obedience, they flood people with contradictions until thinking for themselves becomes difficult; they create conditions that erode independent thought, until contradiction no longer even registers.

As someone who has spent years reading Freud, Campbell, Jung and the early internet’s attempts to catalog human sexuality, I’ve come to a blunt conclusion: sadism is often built into systems of control because that’s what powerful people like. If the whispers on the internet about Freemasonry and its close sibling (child?) Mormonism are to be believed, people in power might go from abusing family to abusing whole communities with coordinated Ritual Abuse. These dynamics, especially as they are actively explained and normalized to this day, have started to look like a feature, not a bug.

‘Star’ Children

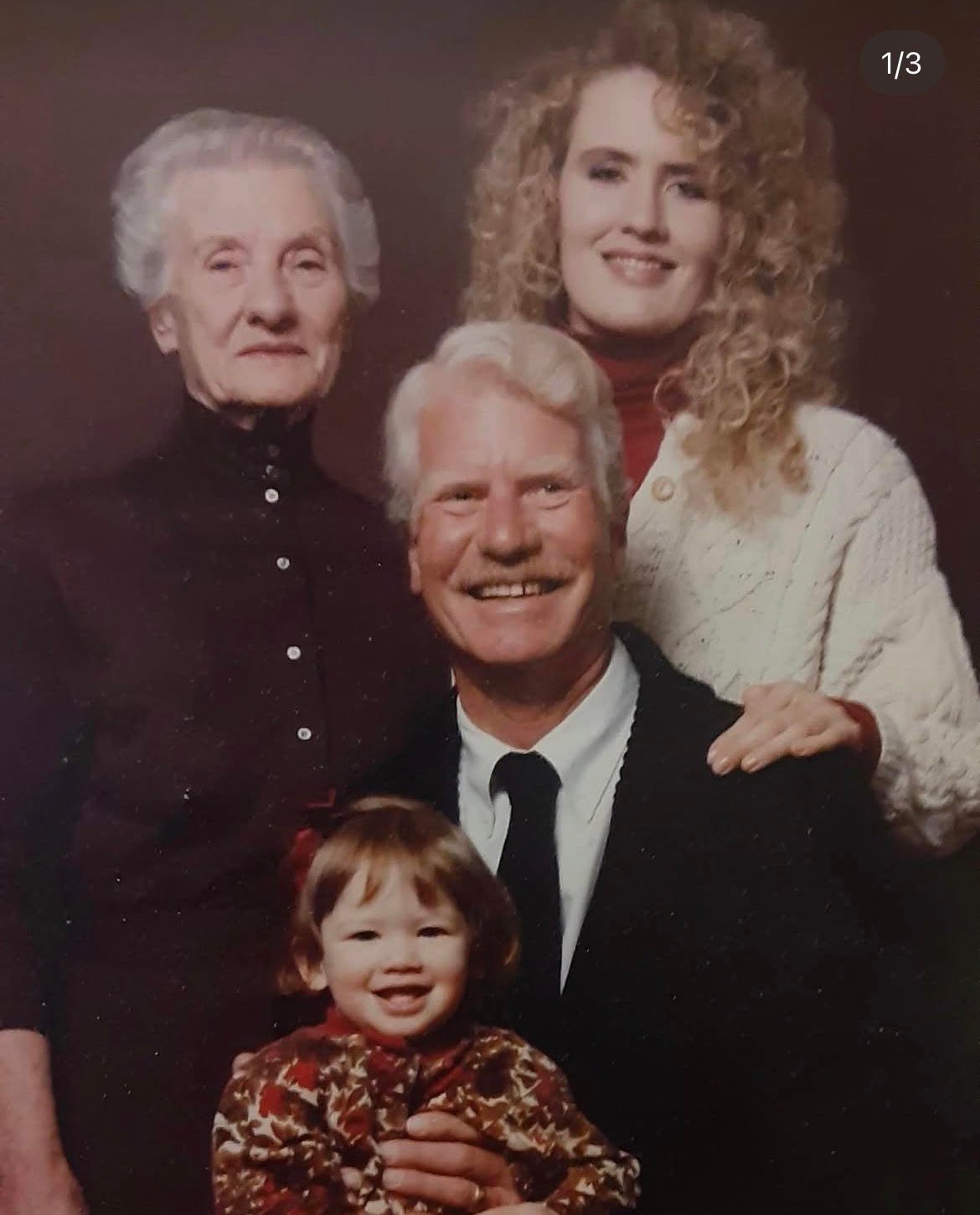

This blog is dedicated to two of the brightest stars I had the pleasure to know on Earth:

My Don Draper grandfather, Jeffrey Cloward McBeth — Every time I watch Benjamin Button, I’m reminded of the treasured time we spent together.

And Adlai Padma Owen, the smartest Godzilla director to ever walk the planet, if only for six short years.

Requiescat in pace, “’Till we meet again.”

One of the more exciting events of my sheltered Mormon childhood was entering public elementary school in fourth grade. I had previously been enrolled in Montessori and homeschool programs, and my mom was torn between family members with strong opinions about how to educate a “highly gifted” little girl.

By fourth grade, I was something of an autodidact, a word I learned later, reading The Elegance of the Hedgehog by Muriel Barbery. I have a soft spot for its two protagonists: Paloma, a thirteen-year-old quietly planning her own death, and Renée, a concierge who hides her expansive knowledge of literature and philosophy behind a grizzled exterior. Both are largely alone, absorbed in their own inner lives, until a chance meeting sparks a quiet recognition between them. An instant bond forms, the kind often seen among unusual children or, as people say with derision or enthusiasm, ‘star children.’

My parents had been divorced since I was three, and my extremely religious father was vocally anti–public school. Meanwhile, my rich Democrat grandparents were firmly pro–state education, worried I wasn’t being socialized properly, and wringing their hands that I’d end up as “Little House on the Prairie” as my new stepmother, Gloria. Gloria had dropped out of school in seventh grade and was baptized as a teenager by my dad while he was on his Mormon mission in Florida.

After my parents divorced, Gloria somehow discovered via the infamous Mormon grapevine — a kind of soft Communist Party network — that my dad was single. They married, moved into a wooden shack in Hyrum, Utah with two bedrooms and no central heating, and vowed to homeschool my step- and half-siblings forever. When Seth and I went to visit, we all huddled in one attic bedroom.

My grandpa Jeff, the liberal ex-Mormon architect, occasionally joked, with a wink and gap-toothed smile, that the Hyrum-Logan area was the “shallow end of the Mormon gene pool.” The Hyrum House, as we called it, was the first of many places my step- and half-siblings lived in during elementary school. My dad set a pattern of out-of-state moves that steadily limited how much we saw each other. He’s been mostly employed by the Mormon Church in a vaguely defined marketing-adjacent role for decades.

The family came back to Salt Lake when I was fifteen, after a migraine/divine vision told my dad to move to an apartment complex next to Temple Square to “make sure I was on the right path.” When all was said and done, there were seven of us kids. I was technically the second-oldest, after my stepbrother, but he was always about ten inches shorter than me.

Gloria and God’s gifts

What many people don’t get about the special bonds unusual, gifted or “autistic” kids share is just how singled out many of us have been by “normies” — how often that singling out is meant to sting, or how often it’s done by other kids’ moms. It seems to be more pointed when these women’s whole identities ride on being “successful” stay-at-home mothers.

When your one role is mom, you want your child to turn out spectacular. How else do you derive a sense of specialness yourself? As psychoanalysts and psychologists have noted, conservative, highly absorbed mothers (especially within low-income households or those who’ve been abused themselves) can exhibit symptoms of Munchausen syndrome by proxy, closely related to Stage Parent Syndrome. I think of it as a darkening spectrum.

When money is tight or a person’s self-esteem is on the brink of collapse, a sick child, rather than a star child, can provide the attention and social validation they need. If a parent is prone to jealousy — and jealousy toward young girls is common in post-polygamous cultures where youth is lock-step tied to beauty — stress can push even well-meaning behavior into adult-child bullying. In some cases, it turns into abuse all at once; more often, it metastasizes slowly over time.

1990s Utah still had spankings, smackings with slippers or wooden spoons, or, in rare situations, my dad removing his belt to give a “whipping.” The girls in the family were usually not the recipients. I was, however, singled out as an “arrogant little girl” who needed reminding about the importance of being meek. Once, when I was maybe four or five, I had the audacity to say I thought my drawings were better than those featured in the Mormon Friend Magazine. My stepmom reacted hotly: “If you brag or hold your gifts over other children, God will take them away from you.” For years, I was genuinely afraid I’d be struck down by the Lord if I was too proud of myself.

The rebuke really hurt, but it’s one of the mild examples of jabs or outright propaganda campaigns waged on me by jealous older women when I was still a child. This created an odd feedback chamber, where praise that sounded positive might actually be teasing or criticism. From talking to other “gifted” children, I’ve learned that our fear of being mocked and ridiculed for good performance makes trusting difficult.

To be honest, the female bullying I experienced growing up makes it hard not to notice how many abusive women there are — and how rarely they’re corrected.

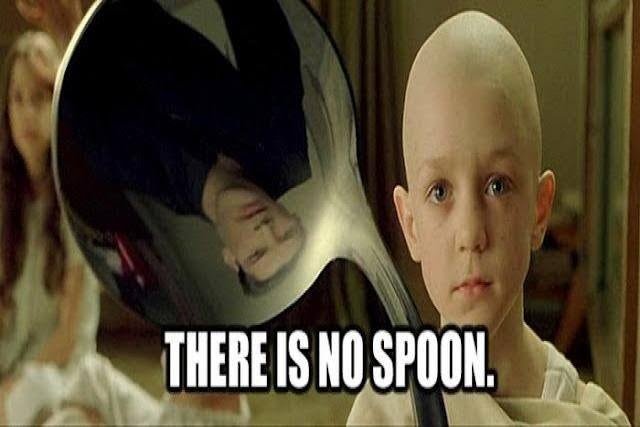

Indigo children, or “There is no spoon.”

Despite misgivings, I started public school in fourth grade like the normal child I definitely wasn’t. The not-normal presented immediately. My teacher complained that I spent most of the day staring out the window, finished assignments almost instantly and didn’t play with the other children. My mom explained to the frustrated teacher that I read encyclopedias for fun, preferred conversations with adults and had done various “magical” things since I was a baby, which made me something of a celebrity at family gatherings.

Once, before I could roll over, my grandmother left me on a blanket with a box of magnetic ABCs. When she came back, I had arranged the letters into a perfect alphabet. She screamed in shock and retold the story — over and over.

When feats of raw baby intelligence became passé, grandma progressed to telling spooky stories about me “levitating during a nap” to increasingly exasperated and jealous aunts and uncles. My grandpa once told her to “stop telling lies to make Hannah seem… weird.” My grandma’s eyes filled with tears: “Oh Jeff! How could you talk to me like that!?” To this day, thinking about my grandpa putting my insane grandma in her place still brings a smile to my face.

Indigo child vs. Star child vs. Special child vs. Autistic child

This was around that late-1990s moment that produced so many satisfying punk artifacts in the American West. The spirit of counterculture — skateboard lore, anti-authoritarian media, SLC Punk! (1998) — spread widely enough to reach even the aggressively suburban Mormon bubble I lived in. The homeschool moms trying to educate little misfits started whispering that their Timmys or Ammons might be indigo children with special abilities.

The homeschool co-op where I spent most of my elementary school years was run by an ex–public school teacher, a Maryland Democrat who had dragon and yin-yang sculptures in the entryway of what she’d named Granite Hills Private School.

Between storytime, geography lessons, and breaks playing Super Mario Brothers on an N64, we learned about the encroachments of the Patriot Act, not long after The Matrix was released. The culture reflected a growing distrust of the surveillance architecture emerging alongside the internet.

Conspiracy was mainstream. It passed from nerd child to nerd child, somewhere between geography lessons, competitions to recite the most digits of pi and races to see who could rollerblade fastest at “Homeschool Skate Night” in the neon-carpeted rinks across Utah.

Why I still read Freud

The escalating conflict between the fourth-grade teacher and my mom led to a professional evaluation. Like many educators before and after her, the teacher seemed to resent the “special” child whose mother insisted there was nothing wrong, only gifts that needed to be accommodated.

The three-day IQ test remains one of the most engaging stretches of time I remember spending with a non-relative adult. The evaluator’s genuine interest in my mind and her intriguing questions sparked a lasting interest in memory, learning and even psychoanalysis. As I write this, The Freud Reader sits on my desk, the second of his collected works I’ve attempted.

She started by asking me to recall every object in the room without looking, and after a series of general knowledge questions that grew steadily more difficult, she asked about my earliest memory. I told her about crawling over to a potted plant and digging, deeper and deeper; my dog was my hero and I wanted to be exactly like him, I said. “Well, you must’ve been a toddler. What an impressive memory for such a young person.” Here was another instant bond: How could I grow up to be like her?

During the course of my education in art history in college, I became familiar with Freud’s concept of a “screen memory.” Childhood memories are notoriously unreliable and often feature ideas and symbols that may not be literal facts, but may reveal deeper and more important truths. The field of childhood memory has been contested by psychoanalysts, psychologists, social workers and members of three-letter agencies ever since Freud sparked a global obsession with dreams and what he called the sub- or unconscious.

Like everything surrounding Freud’s legacy, the debate about how often or to what degree childhood memories are altered as we retrieve them is fraught. Some factions elevate dreams and symbolic memories from childhood, while others say they’re evidence that children fabricate stories of trauma for attention.

The polaroid of me as a baby at the beginning of this blog shows that same plant featured in my earliest memory. Could I have fabricated the memory after seeing the picture, making my first memory later and less impressive? The full context shows this is not the case: I really have clear, narrative recall from before I could talk.

What was missing for decades were any photos of or clues about the dog I loved so much that my earliest memory revolves around him. Like so many parts of our childhoods, symbols, memories and affinities become important later on for different reasons. Much later, this one image surfaced. (I’ll return to the German shepherd puppy’s significance in a later blog.)

Congratulations, you’re in the Matrix

After the IQ tests came back, I tested into sixth grade, so it was reasoned that I was too mature to find class assignments or interactions with my classmates very stimulating. The school was at a loss about what to do, and at that point my dad found out what my mom had done. It was true that she’d refused to let them photograph me for the yearbook, but I still heard him yelling, “NOW THE FEDERAL GOVERNMENT HAS HER IN A FILE!!!” My mom soon put me back in the homeschool co-op.

If you’ve been following anything about Epstein and the narratives surrounding it, including the 2026 yearbook controversy, this is all pretty interesting. In February and March 2026, a social media-fueled controversy emerged around Lifetouch, the largest school photography company in the U.S., due to its connection to Leon Black, a billionaire named in the Epstein files. At the time, my dad’s meltdown began to convince my mom (and me) that he was crazy. Like so many things related to my Mormon upbringing and its echoes, more than three decades later I can’t make heads or tails of it.

The tallest man in the room

My grandfather, Jeffry Cloward McBeth, was born in 1945 on a homestead farm in Payson, Utah. He was one of the few in his high school graduating class to go on to college, and once he got there, he excelled. As an architect, he liked working with wood and paid attention to the smallest design details. He designed homes for developments in Hawaii and Carefree, Arizona. He had impeccable taste, and it showed in everything, including his silk Hawaiian shirts.

He had a wry sense of humor that surfaced rarely, but when it did, it sparkled. He didn’t speak much. I remember him best in fragments: his 6’4″ silhouette in the window, smoking “in secret,” far from his family. He liked being alone, liked espresso and chocolate donuts, and carried himself with a graceful dignity.

His stories about the late 1960s, when he worked as a draftsman in the Financial District and lived near Haight-Ashbury, forever animated San Francisco in my imagination and heart.

Every time we saw each other, I’d babble on about my travels. He would sit there, listening, radiating a quiet pride that warmed me for months. He was one of the few people who made me feel fully seen, every time. I’m sure we will, as the song goes, “meet again someday.”

We’ve All Been Bergotte Lately

On AI, aesthetic jealousy and the unbearable nearness of perfection

In The Captive (1923) and The Fugitive (1925), Marcel Proust — the writer the French revere and Americans keep meaning to finish — recounts the death of Bergotte, a novelist of moral precision and exhausted genius. Once celebrated for the spiritual lucidity of his early work and later dismissed for its ornamental perfectionism, he’s the kind of artist whose life narrows into a single pursuit: perfect aesthetic expression.

Bergotte attends an exhibition of The View of Delft by the seventeenth-century Dutch painter Johannes Vermeer. Across this and Vermeer’s thirty-two other surviving paintings, space and perceptual elements are balanced into visual harmony, allowing looking to settle into a stillness where radiance emerges. What Bergotte feels, and what generations of museum-goers have also experienced, is similar to what Tibetans call rigpa — a mystical awareness of the divine present.

In Woman Holding a Balance, light bends across a wall as a woman holds a set of scales at the moment when they come into stillness. The harmony is deliberate, every detail measured with care, producing sensations that feel almost otherworldly.

I. Aurea mediocritas: “golden moderation” or the middle path