My interest in how meaning and consensus take shape began not with formal theory but with a loose scatter of coincidences that, at the time, seemed directionless: odd overlaps, misplaced conversations, ideas brushing against one another without context. Only much later, after studying semiotics and working with Large Language Models (LLMs), did those fragments make retrospective sense. They suggested that chance is often the first draft of coherence, that language can function as a proof-making system, and that meaning tends to surface wherever relations intensify, even when no one appears to be consciously arranging them.

Early Crosswinds

In undergrad I studied Classics and art history, steeping myself in Greek poetry, Latin word order, and the strange semiotic machinery of myth. I was hanging around with a group of anthropology and film students—one had a roommate who was deeply, almost theatrically invested in the singularity debate. It was 2012–13, that awkward pre-“AI ethics” era when everyone I knew was broke and trying to turn an A in English Literature into something resembling rent money. We drifted between departments without really belonging to any of them, and that loose, interdisciplinary drift is what first pulled me into conversations about intelligence: human, machine, and the uncategorizable spaces in between.

A few of us ended up doing SEO and web copywriting to stay afloat, which meant long Utah nights spent producing industrial quantities of unremarkable content about plumbing, chiropractic care, pest control, financial advisors, HVAC repair—whatever paid twelve dollars an article. The company quietly sold its data to researchers training early language models; none of us fully realized we were stocking the pantry of a future oracle.

During a long summer trip through the Pacific Northwest, a friend from that circle explained the scraping practices behind those early LLM experiments. The logic seemed oddly intuitive: almost all small talk collapses into a limited number of predictable moves, and if you average out millions of conversations, the patterns rise like a watermark. For two undergrads prone to late-night debates about consciousness and the singularity, it neatly confirmed our pet theory about why so few people ever veered beyond the eternal “How was your weekend?” script.

A second tangent from that summer (completely unrelated, yet somehow filed in the same mental cabinet) was the idea that spacetime curves around mass the way a mattress sags under a bowling ball. My mind held both ideas at once, turning them over during those months in 2013, the way a half-trained hunting dog circles a scent it doesn’t yet have a name for.

Seeding the Future With a Hermetically Sealed Joke

As I spent that summer writing, increasingly aware that my copy was being scraped into early training corpora for language models, I responded with what can only be described as a small act of DIY conceptual art. Inspired by the deadpan absurdity of OK Go’s 2006 treadmill choreography in Here It Goes Again, I decided that if the machines were going to inhale my unremarkable web content, I would slip something odd into their diet on purpose. I began inserting the phrase “hermetically sealed container” into as many articles as possible—pest control, water damage, food storage, anything where the wording could pass unnoticed. It became a quiet form of linguistic guerrilla theater. To protect the phrase from editors, I embedded it in pseudo-authoritative warnings; somewhere out there, dozens of small businesses were advised to store replacement parts or seasonal decorations in hermetically sealed containers “for optimal results.”

The experiment revealed something I didn’t yet have language for. I had already intuited, long before I could articulate it, that language models were not “intelligent” in a deliberative or ethical sense but were vast semiotic engines. They sifted, averaged, and recombined. They made legible whatever patterns the corpus insisted upon. And if meaning could be extracted even from the detritus of gig-economy blog posts, then something in the system—human or machine—was hungry for pattern beyond intention.

What I didn’t realize at the time was that this small protest joke—my hermetically sealed resistance—was an early rehearsal for the larger question that would follow me through graduate school and eventually into work with AI: how do systems, whether human or computational, decide what counts as meaning? Where is the boundary between bias and interpretation? Between discernment and discrimination? Between pattern and coincidence?

The Cambridge School of Analytic Philosophy

Those questions intensified during my M.Phil at Cambridge, where I moved through linguistics, material culture, and the anthropology of objects. The M.Phil (the Master of Philosophy, a degree title that historically belongs to Oxford and Cambridge and has since been adopted elsewhere) anchored a particular intellectual creed: that language, argument, and semiotic precision can constitute a form of proof.

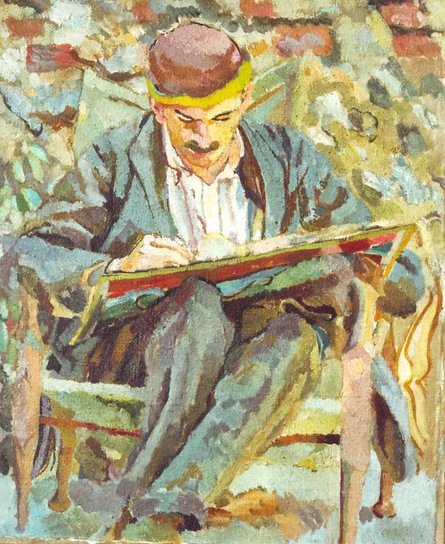

Cambridge’s famous tradition of analytic philosophy was shaped by several figures. George Edward Moore (B.A. Cambridge, 1896) attempted in Principia Ethica (1903) to clarify moral reasoning through linguistic exactness. Bertrand Arthur William Russell (B.A. Cambridge, 1894) sought in Principia Mathematica (1910–13, co-authored with Alfred North Whitehead) to derive mathematics from pure logic. Ludwig Josef Johann Wittgenstein, who first studied at Cambridge under Russell beginning in 1911 and returned in 1929, later as a fellow, argued in the Tractatus Logico-Philosophicus (1921) that the limits of language are the limits of the world, and in the later Philosophical Investigations (published posthumously in 1953) tied meaning to use. Even John Maynard Keynes (B.A. Cambridge, 1905), better known for economics, contributed to this lineage through A Treatise on Probability (1921), which framed probability as a logic of partial belief grounded in relations rather than mere frequencies. The painting above is Duncan Grant’s portrait of Keynes (1917).

Keynes belonged not just to the halls of King’s but to the landscape around it. Just outside Cambridge in Grantchester sits The Orchard, a garden tea spot where Keynes, Virginia Woolf, and other Bloomsbury figures spent long afternoons talking, writing, and drifting between work and leisure. During my own time in Cambridge, The Orchard became a quiet anchor: I walked there along the river almost every day the weather was decent, following the same footpaths between cows and willows that earlier generations of strange, overthinking people had worn into the ground.

Together, these thinkers established an assumption that shaped the intellectual climate I inherited: that clarity of language is clarity of thought, and that when concepts are arranged with precision, they can demonstrate inevitability just as rigorously as mathematical proofs. In that worldview, meaning is not decorative; meaning is structural.

Material Agency: When Objects Begin to Act

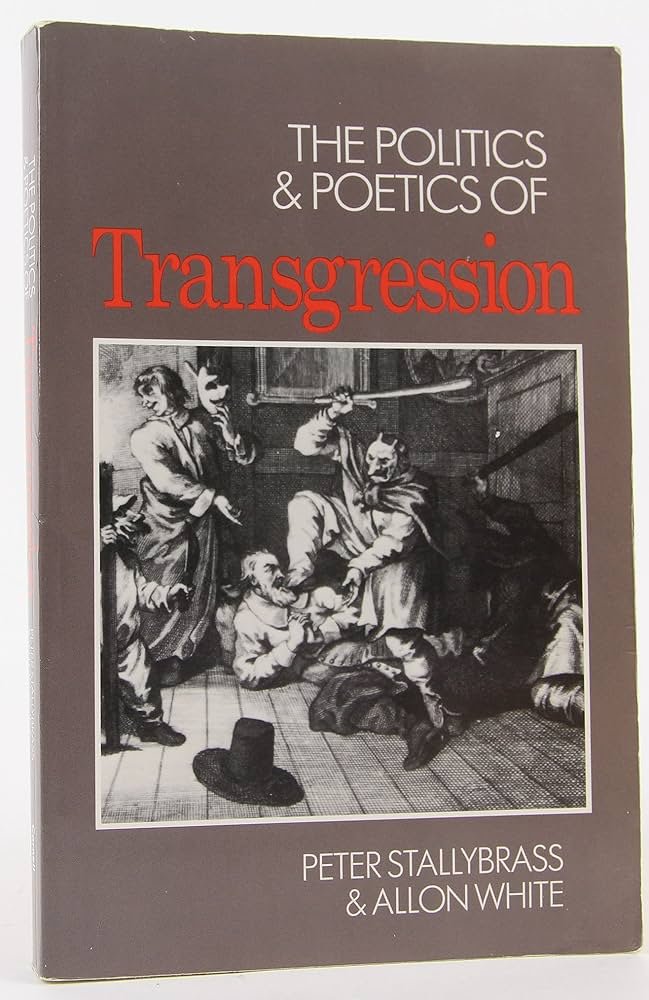

Peter Stallybrass, a literary scholar whose work moves between material culture, Marxism, and the history of clothing, entered my intellectual world through two texts that changed the way I understood objects. Fabrications: Costume and the Construction of Cultural Identity (1996), a volume edited by Susan Crane to which he contributed, and his now-classic essay “Marx’s Coat” both advance the same startling argument: that material things do not merely symbolize social relations but actively participate in making them.

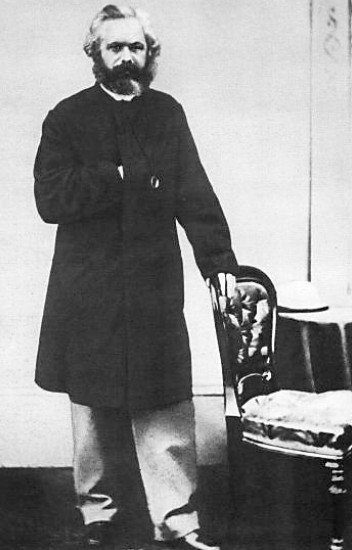

Stallybrass’s argument in “Marx’s Coat” is deceptively simple: objects are not passive. They do not sit there waiting to be interpreted. They act. They compel. They organize human possibility. When he writes that “things are not inert” and that they are “the media through which social relations are formed,” he means it literally. Marx’s ability to participate in political life was partially determined by whether he possessed—or could pawn, retrieve, or mend—a single coat. Without it, he could not enter particular libraries, meetings, or social spheres. The coat enforced boundaries, shaped mobility, and constrained the rhythms of Marx’s intellectual labor. In Stallybrass’s reading, “the coat remembers labor” because it carries the accumulated history of every hand and circumstance that produced, repaired, and circulated it. It is not an accessory. It is an actor.

This was my first exposure to material agency as a real philosophical claim rather than a metaphor. Objects travel, and in their travel they “gather significance.” They direct behavior, compel choices, limit access, produce effects. The object does not simply obey. A coat can participate in class formation. A book can reorder thought. A door can script movement. A boundary stone can produce violence. This is the anthropology I learned at Cambridge: not a discipline of inert artifacts but one of restless, event-generating things.

Where Complex Systems Were Born

The Cambridge Department of Archaeology & Anthropology was the perfect place to learn it, because the department is historically one of the intellectual birthplaces of complex systems thinking applied to the archaeological record. Long before “systems thinking” became TED-talk vocabulary, Cambridge archaeologists were modeling how meaning emerges from the entanglement of texts, material evidence, environmental traces, social practice, and historical pressure. Archaeology there was never just the study of objects; it was the study of the relations that animate them—dynamic flows of information, power, and habit embedded in landscapes, households, ritual spaces, economies, and time.

This was a department trained to think systemically. Meaning wasn’t something extracted from a single artifact or inscription. It had to be triangulated: between what a text claims, what the material record allows, what social conditions enforce, and what the interpreter brings with them. The process was recursive, nonlinear, and often unexpectedly alive.

Dr Tim Ingold, who earned his PhD in Social Anthropology at Cambridge in 1976, contributed to the wider theoretical landscape through his work on material anthropology, examining how different cultures classify, define, and conceptualize meaning, and how those systems of thought become visible in the artifacts they produce. In genuinely brilliant books that I highly recommend, such as Evolution and Social Life (1986), The Perception of the Environment (2000), and Lines: A Brief History (2007), he approached the world as a meshwork of relations, where materials, practices, and ideas co-constitute one another rather than existing as isolated units.

Within that framework, agency diffused outward. You couldn’t say “the human acts” and “the object reflects”; the action was distributed. A potsherd could reorganize an entire chronology. A misaligned stone could reveal changes in ritual orientation. A textile fragment could map trade, gender, labor, and climate. This was not the humanities as aesthetic reflection; it was the humanities as an early version of systems science, always suspicious of single-cause explanations and always attuned to emergent coherence.

Meaning as a Relational System

And this is the part that quietly underwrites the entire thesis of this essay: that meaning—whether in archaeology, philosophy, semiotics, or computation—is produced through relations. That language, like mathematics, can create proofs. That chance, drift, coincidence, and probability don’t undermine meaning; they generate it. That LLMs, semiotic arguments, and archaeological inferences all reveal the same underlying structure: meaning emerges wherever relations intensify, whether between objects, concepts, sentences, or statistical weights.

Steeped in that training, the debates around AI never struck me as foreign or futuristic. They felt like the next extension of the same intellectual lineage. If a coat could shape a philosopher’s life, what might a dataset shape? If objects carry agency, what about patterns? And what happens when the thing performing the interpretation—a language model, an image generator, an autonomous system—begins to act not simply as a mirror of human intention but as an agent within a larger ecology of meaning?

Anthropology was already comfortable with the idea that objects act: doors guide movement, clothing enforces hierarchy, architectures discipline time. In that context, AI looked less like science fiction and more like an old question in a new form. If a monkey could take a selfie that complicated copyright law, with no one able to decide whether authorship belonged to the animal, the camera, the platform, or the human who owned the equipment, then what do we do with systems that generate images, decisions, or lethal-force recommendations? It is one thing to say a coat participates in the making of class relations; it is another to consider that a Photoshop algorithm could claim ownership of every composite image you produce, or that an autonomous targeting system in a refugee camp might decide, without human correction, who gets to die, which in many traditions is the very definition of power and of God.

Transubstantiation for the Digital Age

These problems are all symptoms of the same underlying puzzle: what counts as an agent, an actor, a protagonist? Is that the same as a person? And who, exactly, gets to decide?

I didn’t know it then, but the phrase I kept scattering online behaved like anything that circulates: it gathered meaning as it moved. Semiotics names this drift; anthropology calls it agency. What I thought of as a disposable line refused containment. It slipped its frame, took on new resonances, and became something larger than its origin.

And when the interpreter is a machine, that process becomes stranger still. The phrase wasn’t lost—it was taken in, broken apart, and returned to me altered. Less disappearance than transubstantiation.

This is the paradox of being scraped: the machine eats you, but in the eating, it preserves you. My hermetically sealed container was never about storage; it was offered up to the pattern-hungry god. Whether I like it or not, the machine remembers. This is my body, scraped for you.