When my brother gave me a Raspberry Pi one Christmas in the early 2010s (a palm-sized computer meant to teach beginners how to code), I’d been studying Greek and Latin for several years at the University of Utah. By that point, I was deep into intermediate courses in the Department of Languages & Literature, courses that ended up reorganizing how I thought.

I was lucky to have professors whose passion for ancient languages shaped me—Professor Randy Stewart, Margaret Toscano, and Jim Svensen among them—each offering a different way of thinking through a text, a question, or a problem.

Those years were quietly training my mind to think in structures—patterns, contrasts, paired ideas. So when I finally opened the Raspberry Pi tutorials later that winter, the logic didn’t feel new at all. It felt like something I had already learned in another language.

The Old Logic Behind New Machines

When I sat down over winter break and started the tutorials, what stood out immediately was the clarity of the structure. The if/then statements and small branching choices that guide a program forward followed the same logical architecture I had been working through in Greek. The μέν / δέ construction, literally “on the one hand / on the other,” sets up a two-part contrast that divides an idea into paired alternatives. Aristotle relies on the same kind of pairing when he lists the basic contraries of nature, “τὰ ἐναντία, οἷον θερμὸν καὶ ψυχρόν” (“the contraries, such as hot and cold,” Categories 11b15), even though that phrase links its pair with καί rather than μέν / δέ. In its simplest form, it is a binary: a choice between two structured possibilities.

The same pattern appears in conditional constructions like εἰ with the optative or ὅταν with the subjunctive, which sketch out hypothetical paths depending on whether a condition is or is not fulfilled. Basic programming follows the same logic, not metaphorically but mechanically, moving forward only through a chain of divided possibilities.
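A minimal sketch of that two-way branch, assuming Python (the essay does not say which language the tutorials used). The function names and the 20-degree threshold are hypothetical, chosen only to echo the hot/cold contrary above.

```python
def men_de(temperature_c: float, threshold_c: float = 20.0) -> str:
    """Return one of two paired alternatives, like a men/de contrast."""
    if temperature_c >= threshold_c:
        # "on the one hand" (men): the condition is fulfilled
        return "hot"
    else:
        # "on the other hand" (de): the condition is not fulfilled
        return "cold"


def describe(readings: list[float]) -> list[str]:
    """Apply the same two-way choice to each reading in turn."""
    return [men_de(r) for r in readings]


print(describe([35.0, 4.5, 20.0]))
```

Every reading falls on one side of the contrast or the other; the branch has no third outcome, which is the sense in which the program advances only through divided possibilities.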

Greek philosophy forms the underlying structure of what later becomes formal logic, and formal logic becomes the foundation of every programming language. Aristotle writes in the Organon that “τὸ δὲ ἀληθὲς καὶ ψεῦδος ἐν τῇ συνθέσει καὶ διαιρέσει ἐστίν,” meaning that truth and falsity arise from how things are combined or separated (De Interpretatione 16a10–12). A statement is true or not true. A branch is taken or not taken. Binary computation inherits this exact principle: a system advances only by dividing itself into twos.

That same twofold pattern—opposing yet coordinated pairs—shapes more than syntax or algorithms. It echoes through our bodies, our senses, and our movement. Once you begin looking for twoness, it becomes difficult to ignore how deeply it structures the world.

Heraclitus and the Unity of Opposites

- Unity of opposites: For Heraclitus, what we call “opposites” are inseparable partners. Day implies night, heat implies cold, and each gains meaning only through the contrast with its counterpart.

- Mutual dependence: Opposing states are not truly independent; they arise together. A shadow needs light to exist. Neither element stands alone without the other defining it.

- Cosmic tension: Heraclitus saw conflict as the driving force of the world. His line “War is father of all” suggests that struggle is not destructive but generative — the tension that keeps reality moving.

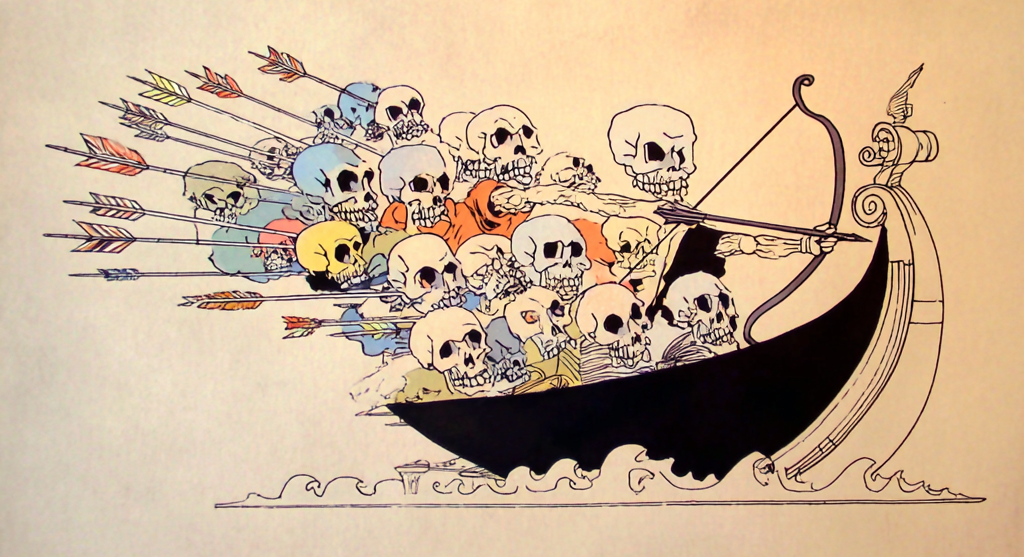

- Harmony from strain: Balance emerges through opposition. He compared this to a bow or a lyre, where beauty and function come from forces pulling in opposite directions. A single object can hold contradictory qualities, as when he said a bow’s name “is life, but its work is death.”

- The logos: Underneath all change is the logos — a rational, ordering principle that holds opposites together. For Heraclitus, the world’s constant flux isn’t chaos but the expression of a deeper coherence.

- Perspective and flux: What look like strict oppositions are, from a broader perspective, variations of the same underlying reality. Everything is in motion, and opposites are simply different phases of that movement.

Heraclitus wrote these ideas not as abstractions but in sharply compressed, poetic fragments that still read like koans. Two of the most famous capture the tension at the heart of his philosophy:

Heraclitus: Original Greek Fragments

Fragment DK B53

πόλεμος πάντων μὲν πατήρ ἐστι, πάντων δὲ βασιλεύς,

καὶ τοὺς μὲν θεοὺς ἔδειξε τοὺς δὲ ἀνθρώπους,

τοὺς μὲν δούλους ἐποίησε τοὺς δὲ ἐλευθέρους.

“War is the father of all and king of all; it reveals some as gods and others as humans; it makes some slaves and others free.”

Fragment DK B48

τῷ οὖν τόξῳ ὄνομα βίος, ἔργον δὲ θάνατος.

“The name of the bow is life, but its work is death.”

That same binary skeleton—true/false, hot/cold, on/off—turns out to be more than a linguistic habit. It is built into how our bodies are assembled and how we move through the world.

The Number Two (Body, Symmetry, Anthropology)

Human bodies are built on bilateral symmetry: two eyes, two ears, two nostrils, two hands, two lungs, two sides of the brain, and two chambers on each side of the heart. Even our upright posture depends on the coordinated tension of paired muscle groups pulling against and with each other. Anthropology doesn’t treat this twoness as decorative; it sees it as the direct inheritance of the moment early hominids shifted from moving on four limbs to balancing on two. Bipedalism is the hinge that changed everything: the way we balance, the way we allocate energy, the way childbirth works, the risks our joints face, and even the shape of our social world.

When I was at Cambridge, I had friends at Darwin College who were deep into paleoanthropology, and they treated upright walking with a near-religious seriousness. It wasn’t just another evolutionary detail. It was the event that set the entire human project in motion. The spine reorganizes, the pelvis narrows, the hands are freed, the skull rebalances, and suddenly you have a creature who sees differently, moves differently, and eventually thinks differently. Once you understand this pivot, the presence of twoness—paired structures, paired functions, paired risks—feels inevitable. It is written into the architecture of our skeletons long before it becomes a mental model.

The Price of Walking on Two Legs

Years later, I found myself on the freelance writing beat, assigned a run of podiatry and hip-replacement articles meant to boost the SEO of medical providers around Indianapolis. Every surgeon I interviewed confirmed what my friends at Darwin College had said in a more theoretical way: hip deterioration isn’t a personal failure, and it isn’t simply a matter of lifestyle or luck. It is the predictable outcome of balancing an entire species on two load-bearing joints that were never designed for the workload we ended up giving them.

Those interviews made the anthropology lectures I’d overheard at Cambridge concrete. The same evolutionary shift that freed our hands for tools, expanded our range of travel, and eventually supported the development of complex intelligence also introduced a mechanical weakness at the heart of our locomotion. The story of bipedalism is often told as a triumph—a leap toward cognition, migration, coordination—but the body keeps the receipts.

We owe our cognitive advantages to the moment an early hominid stayed upright. The posture that enabled tool use and expanded our vision also concentrated movement into two joints with no evolutionary precedent for the load. The trait that ensured our survival is the same one that produces our most ordinary physical failures. Twoness isn’t just symmetry—it’s the fault line that shows what evolution gave us and what it demanded in return.

Our Symmetry, Our Fault Line

Twoness doesn’t just shape our bodies and reasoning; it shapes how we behave together. The same circuits that keep us balanced on two legs make us responsive to mirrored movements, call-and-response patterns, and the emotional force of acting in unison. Marching, chanting, clapping in time—these are not cultural accidents but binary loops built into our motor system, toggling between left and right, tension and release. Once a group falls into that rhythm, the pattern becomes its own logic.

Chanting and hypnosis draw on the same ancient circuitry. Give the brain a simple back-and-forth—two beats, two states, two breaths—and it begins to fall in step. Mantras, pendulums, spirals: each works by narrowing attention until the mind stops negotiating and simply follows the rhythm. Argument requires effort; repetition requires surrender.

The Politics of On/Off Thinking

After you notice how easily the nervous system locks into simple patterns, it becomes impossible not to see the same mechanism at work in politics. Modern discourse relies on binary shortcuts—safe/dangerous, credible/not credible, mainstream/conspiracy—that act less like judgments and more like switches, letting people sort ideas without confronting their complexity. The same twoness that keeps us walking in rhythm also makes us think in rhythm, repeating whatever categories the culture provides.

Nowhere is this clearer than in the way “conspiracy” is used as a reflexive dismissal. What began as a descriptor has hardened into a kill-switch that ends a conversation before it starts. The irony is that many political narratives function exactly like the conspiracies they condemn: tightly plotted stories with villains, destinies, and sweeping explanations of how the world works. Because they come from the in-group, they’re not seen as conspiratorial—only as truth.

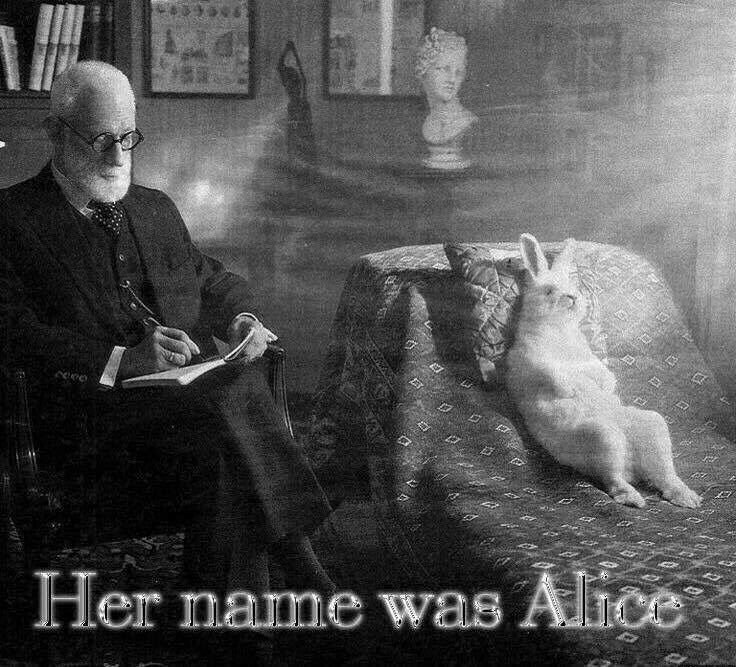

Once thought collapses into these two poles, the space between them fills with the logic the binaries can’t hold. Cognitive dissonance becomes comfortable; contradictory beliefs can sit side by side because the structure itself absorbs the tension. This is where Lewis Carroll becomes oddly useful: a world of paradox and nonsense emerges whenever a system insists on being too simple for the reality it claims to explain.

This collapse also betrays the Greek intellectual tradition we inherited. Aristotle built logic on distinctions, conditional reasoning, and hypothesis—provisional thinking, not reflexive dismissal. Yet in contemporary language, “conspiracy theory” has swallowed the entire category of hypothesis, as though an unverified idea were a moral offense. Binary logic—true/false, one/zero—was always meant as scaffolding, not a worldview. When a culture mistakes the skeleton for the full structure of thought, it loses the ability to evaluate ambiguity, early theories, historical analogies, or anything that resists instant classification. The binary does the sorting, and the mind stops doing the thinking.

Lewis Carroll understood better than almost anyone that a system built on rigid binaries eventually exposes its own absurdities. Long before Alice’s Adventures in Wonderland became a cultural shorthand for surrealism, Charles Dodgson—the Oxford mathematician behind the pen name—was publishing work on symbolic logic, syllogisms, and paradox. His Symbolic Logic (1896) and earlier papers demonstrate a meticulous mind fascinated by how small errors in reasoning can warp an entire system. Wonderland is not chaos for its own sake; it is what happens when logic is followed so strictly, or so literally, that it loops back into nonsense.

In Alice’s Adventures in Wonderland (1865) and Through the Looking-Glass (1871), Carroll builds worlds where binary categories are stretched until they break. Things are and are not. Directions reverse themselves. “Up” and “down” become interchangeable states, not opposites. The Cheshire Cat can disappear until only its grin remains—an ontological joke about predicates without subjects. The White Queen believes “six impossible things before breakfast,” a line that functions as both whimsy and a critique of anyone who treats belief as a binary rather than a spectrum. The Red Queen’s rule—“it takes all the running you can do to keep in the same place”—captures the experience of a system that moves but does not progress, a perfect metaphor for political discourse stuck between two immovable poles.

Carroll’s most explicit engagement with logical failure appears in “What the Tortoise Said to Achilles” (1895), a short dialogue published in Mind, in which the Tortoise exposes a paradox at the heart of deductive reasoning. Achilles presents a simple syllogism, but the Tortoise refuses to accept the conclusion unless each inferential step is itself turned into a new premise—and the regress never resolves. It’s a demonstration of how a system built too rigidly on formal logic can collapse under its own structure. The reader is left with the uncomfortable realization that logic alone cannot force acceptance; something extra-logical—intuition, agreement, shared understanding—must step in. In other words, even the most orderly systems need a space outside the binary.
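The regress can be sketched in a few lines of Python. This is an illustration under stated assumptions, not Carroll’s own formalism: the labels A, B, and Z follow the dialogue, while the function name `tortoise_regress` and the string format are hypothetical.

```python
from itertools import islice


def tortoise_regress(premises, conclusion):
    """Yield the extra premise the Tortoise demands at each step, without end."""
    accepted = list(premises)
    while True:
        # "If everything accepted so far is true, the conclusion must be true."
        demanded = f"If ({' and '.join(accepted)}) then {conclusion}"
        yield demanded
        # Achilles writes the demand down as one more premise, and the
        # Tortoise then asks for the hypothetical that covers that one too.
        accepted.append(demanded)


# The generator never stops by itself; islice cuts it off from outside.
steps = list(islice(tortoise_regress(["A", "B"], "Z"), 3))
for step in steps:
    print(step)
```

Nothing inside the loop ever reaches the conclusion. The only thing that ends the sequence is the cutoff applied from outside, which plays the role of the extra-logical agreement the dialogue points toward.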

This is precisely why Carroll is the perfect guide for understanding the weird cognitive zone between political binaries. Wonderland is not absurd because it lacks rules; it is absurd because its rules are too strict. It is a world where binary reasoning—true/false, big/small, sense/nonsense—applies cleanly until reality complicates it, and then everything fractures. Carroll shows how quickly a mind can grow comfortable with contradictions when it is forced to operate inside a framework that cannot accommodate nuance. When Alice asks questions that the system can’t process, she is told that the refusal to accept nonsense is the real problem.

In this way, Carroll anticipated a psychological pattern we can see clearly now: when a culture demands that people choose between two fixed narratives, all the discarded reasoning, inconvenient evidence, and unapproved hypotheses get pushed underground. They don’t disappear; they accumulate. They form a Wonderland of their own—a space where banned questions go, where contradictions coexist without resolution, where the logic cast out by the binary finds a strange new coherence. This is not chaos from the absence of structure; it is chaos produced by too much structure, the way a poorly written program enters an infinite loop not because it is disordered, but because it is too rigid to escape itself.

Carroll’s work suggests that nonsense is not the opposite of logic. It is what happens when logic is applied beyond its natural limits—when the world’s complexity is filtered through an on/off switch that cannot register anything in between. And this, ultimately, is why so much modern discourse feels like Wonderland: not because people are irrational, but because they are using a system of reasoning that is far too simple for the problems they are trying to understand.

Conclusion: The Limits of Two

If there is one lesson that ties all of this together—from Aristotle’s conditional clauses to the symmetry of our skeletons, from bipedal strain to political slogans, from the pendulum’s swing to Alice chasing a vanishing grin—it is that binary systems are powerful precisely because they are simple. They help us walk, breathe, chant, categorize, and compute. They let us build machines that reason, or at least perform something close enough to reason that we mistake it for intelligence. But the simplicity that makes binaries so efficient is also what makes them dangerous. They tempt us into believing that the world itself runs on clean divisions: true or false, safe or unsafe, credible or conspiratorial.

In reality, most of what matters lives in the space between. Hypotheses, early-stage ideas, historical analogies, political comparisons, uncomfortable intuitions—these are all fragile forms of thinking that require room to unfold. When a culture collapses everything into two poles, it doesn’t eliminate complexity; it just forces complexity underground, where it mutates into confusion, contradiction, or the kind of nonsense Carroll understood so well. A binary system can tell us whether a statement fits within its parameters, but it cannot tell us whether the parameters are adequate to the world.

To recognize this is not to abandon logic, but to remember what logic was originally for: to help us refine our questions, not silence them. Aristotle left room for uncertainty; Heraclitus insisted on flux; Carroll exposed the absurdity that appears when rules overreach. Even our own bodies, balanced precariously on two legs, remind us that evolution is not a clean progression but a series of trade-offs. Twoness is part of us, but it is not all of us.

We outgrow binaries not by rejecting them, but by seeing their limits. The mind becomes freer the moment it notices when the switch has been flipped on its behalf—when “conspiracy theory” is being used as a way to end thought rather than begin it, when a comparison is dismissed before the reasoning can be heard, when an idea is forced into a category too small to contain it. The world is irreducibly complex, and any system that insists otherwise will eventually turn itself inside out, like Wonderland following its own rules to the point of absurdity.

If there is a way forward, it begins where the binary ends: with the willingness to let a thought be unfinished, a theory be tentative, a question be unsettling. The space between two poles is not a void. Binaries are tools; problems arise only when we mistake them for reality.

Works Cited

- Aristotle. Categories. Translated by J. L. Ackrill, Clarendon Press, 1963.

- Aristotle. De Interpretatione. Translated by E. M. Edghill, in The Works of Aristotle, edited by W. D. Ross, vol. 1, Oxford University Press, 1908.

- Carroll, Lewis. Alice’s Adventures in Wonderland. Macmillan, 1865.

- Carroll, Lewis. Through the Looking-Glass, and What Alice Found There. Macmillan, 1871.

- Carroll, Lewis. “What the Tortoise Said to Achilles.” Mind, vol. 4, no. 14, 1895, pp. 278–280.

- Carroll, Lewis. Symbolic Logic. Macmillan, 1896.

- Mastronarde, Donald J. Introduction to Attic Greek. University of California Press, 1993.